This study looks at the effectiveness of an automatic adaptation management system that gradually adjusts the gain and frequency response over time in order to ease the patient toward the full fitting target.

The historical attitude regarding target fitting rules and user acceptance is best encapsulated in the following quote, “The client should be encouraged to get used to the hearing aids that should be the most beneficial rather than the ones that sound the best initially.”1 Taken out of context, this approach seems to lack much clinical relevance. It is often the case that an initial fitting—full target gain that is appropriate for the wearer’s hearing loss—will lead to resistance precisely because of sound quality complaints. This effect is most likely to occur in the case of new wearers with more severe hearing losses.2

However, the quote above is not as myopic as it may seem. It was based on the philosophy that hearing aid wearers will only acclimatize to the amplification that they are using, whether it is appropriate for their hearing loss or not. The concept of acclimatization to hearing aids was first introduced by Gatehouse.3 Thus, the previous authors were stating that you can’t under-fit a new hearing aid wearer and expect them to acclimatize to appropriate amplification. Consequently, the philosophy was to fit to full target and allow acclimatization to do the rest.

In a later paper, Mueller and Powers4 describe four possible outcomes for this “You’ll get used to it” technique:

- The wearer adapts to it;

- The wearer gets enough benefit from it to put up with it without really liking it;

- The wearer is annoyed enough to become a semi-unhappy part-time wearer; and

- The wearer rejects amplification entirely.

If you’re keeping score, that is one good outcome, one fair outcome, and two really bad outcomes.

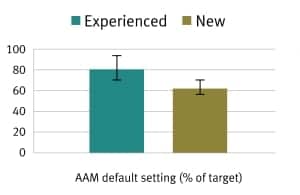

Figure 1. The mean starting points (±1 standard deviation) for the new and experienced hearing aid wearers in the group in this study. A value of 100% would equal the full fitting target.

Unfortunately, the alternative method yields potentially worse outcomes. For example, it has been noted by Schum5 that many new wearers present clinically with a preponderance of high frequency hearing loss. An appropriate fitting would apply proportionately more high frequency gain than the wearer is likely to appreciate. They will often complain that the greater high frequency gain results in a tinny or thin sound quality. This can lead to one of the three undesirable outcomes described by Mueller and Powers.4

Many clinicians and some hearing instrument manufacturers are therefore inclined to avoid the use of well-researched target gain rules, such as NAL-NL26 or DSL v5.0,7,8 in favor of providing a flatter frequency response. This flatter response may provide a sound quality that more new wearers report as “natural” compared to DSL v5.0 or NAL-NL2 fittings. However, it can easily lead over time to the complaint that the hearing aid makes things louder without making speech any clearer—a most undesirable outcome. Furthermore, without the application of more high frequency amplification, the wearer isn’t likely to acclimatize to an appropriate and efficacious fitting capable of providing the best possible speech understanding.

Technology now offers a solution to this fitting conundrum. Digital hearing aids equipped with an automatic adaptation manager allow the wearer to have it both ways. Such an adaptation manager was described in the March 2012 edition of HR.9 The premise is to initially provide the wearer with less overall gain and a flatter frequency response to promote high first-fit acceptance. Then, gradually and imperceptibly—over a period of days, weeks, or months—the hearing aid increases the gain and adjusts the frequency response until it reaches the wearer’s prescribed frequency/gain target for the selected fitting formula. In this way, acclimatization takes place gradually and comfortably over a short period of time, and the process is imperceptible to the wearer.

During the course of validating this new feature, we undertook a study to ensure that the automatic adaptation system was working as intended and that the wearers accepted the additional gain as it was applied over a 5-week period. This article summarizes the results.

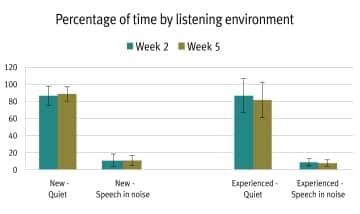

Figure 2. Mean use time in hours per day (±1 SD) for both new and experienced wearers before each follow-up visit. The mean use time was about 10 hours/day.

Figure 3. Percentage of time by listening environment. About 80% of the study participants’ time was spent in quiet environments.

Study Overview

A total of 36 participants (20 experienced wearers and 16 new wearers) were fitted with Unitron Quantum hearing aids. Each participant was fitted with default settings for all parameters, including those of the Automatic Adaptation Manager (AAM). The AAM sets the gain model of the instrument to some percentage of the full target gain, calculated by either NAL-NL1 or DSL v5.0. The starting point was calculated based on the wearer’s age, hearing loss, and experience with amplification. The mean starting points (±1 standard deviation) for the new and experienced hearing aid wearers in the group are shown in Figure 1. A value of 100% in Figure 1 is equal to a full target fitting.

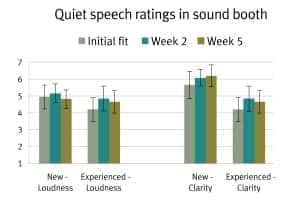

Figure 4. Quiet speech ratings of participants in the sound booth.

The participants wore their hearing aids for the next 5 weeks. During that time, the AAM gradually adjusted their gain models up toward full target at a rate of 5% every week assuming 10 hours of use each day.
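The adaptation schedule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the AAM's actual algorithm: it assumes the 5% weekly step is measured in percentage points of full target, that progress scales linearly with logged hours of use relative to the nominal 10 hours/day, and that adaptation stops at 100%. The function name, its parameters, and the 80% starting point are hypothetical.

```python
def percent_of_target(start_pct, hours_of_use,
                      rate_pct_per_week=5.0, nominal_hours_per_day=10.0):
    """Estimate the gain setting as a percentage of full target.

    Assumption (not from the article): progress is proportional to the
    accumulated hours of use, where one "week" is 7 nominal days of use.
    """
    nominal_hours_per_week = nominal_hours_per_day * 7
    weeks_equivalent = hours_of_use / nominal_hours_per_week
    # Adaptation stops once the full fitting target (100%) is reached.
    return min(100.0, start_pct + rate_pct_per_week * weeks_equivalent)


# Hypothetical wearer starting at 80% of target and wearing 10 hours/day.
for week in range(6):
    hours = week * 7 * 10
    print(f"week {week}: {percent_of_target(80.0, hours):.1f}% of target")
```

Under these assumptions, a wearer who uses the hearing aids for fewer than 10 hours per day would simply progress more slowly, which is one plausible reading of "assuming 10 hours of use each day."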

The purpose of this study was to evaluate the participants’ perception of loudness and clarity during the 5-week acclimatization period. There were two follow-up visits, occurring at 2 weeks and 5 weeks post-fitting, respectively. There were no adjustments or interventions by the fitters during those visits, only data collection. The participants also were given a diary where they provided us with similar types of ratings to those obtained at the site visits. They were asked to provide ratings of loudness and clarity at the end of Week 1, Week 2, Week 4, and Week 5.

Usage data. At each follow-up visit at 2 weeks and 5 weeks, datalogging information was retrieved that showed how the hearing aids were used. Figure 2 shows the mean hours/day of use (±1 SD) for both new and experienced wearers before each follow-up visit. The mean use time was quite close to 10 hours/day.

Given that the hearing aids under test had four-destination automatic switching, we logged the amount of time spent in each of four listening environments: quiet, speech in noise, noise, and music. By percentage, the overwhelming amount of time was spent in quiet listening situations (Figure 3). Roughly another 10% of their time was spent in speech-in-noise situations. This left an insignificant amount of time in noise-only or music-only listening environments.

Because the hearing aids were in a quiet listening environment for over 80% of the time they were worn, loudness and clarity ratings are reported for quiet listening only.

Figure 5. The Loudness and Clarity scales used by participants in this study.

Sound booth. Loudness ratings over the 5-week period for new and experienced wearers in the sound booth are shown on the left-hand side of Figure 4. Clarity ratings are shown on the right-hand side of the figure. (The 7-point loudness and clarity rating scales that the participants used are shown in Figure 5.)

Notice that loudness ratings for new wearers did not change significantly over the 5-week period, even though the hearing aids were gradually increasing the gain model toward full target gain at a rate of 5% per week. These ratings were for the same speech sample presented repeatedly under controlled conditions in the sound booth. The experienced wearers reported a slight jump in loudness after 2 weeks that seemed to level off again by 5 weeks.

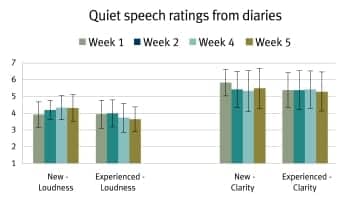

Figure 6. Real-world loudness and clarity ratings for new and experienced users at Weeks 1, 2, 4, and 5.

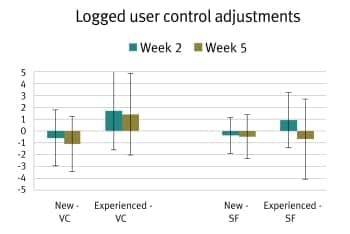

Figure 7. Mean logged changes to the volume control (VC) and SmartFocus (SF) control.

There was a trend among new users to rate the clarity of the speech higher over the 5-week period. Experienced wearers initially rated the speech clarity higher after 2 weeks. However, many of them reached full target fairly quickly because they started at a higher percentage than the new users. Thus, there was no corresponding increase in clarity ratings after 5 weeks in the experienced group.
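The plateau in the experienced group is consistent with simple arithmetic. As a rough illustration, assume the 5% weekly step is measured in percentage points of full target and that wear time matches the nominal 10 hours per day (both are assumptions; the article does not spell out the AAM's internal calculation). A wearer who starts at $s_0$ percent of target would then reach full target after

$$
t_{\text{full}} = \frac{100 - s_0}{5}\ \text{weeks}.
$$

For a hypothetical starting point of $s_0 = 90$, this gives $t_{\text{full}} = 2$ weeks, after which no further gain is added. For a hypothetical $s_0 = 70$, it gives 6 weeks, so the fitting would still be adapting at the end of a 5-week trial. These starting values are illustrative only and are not the Figure 1 means.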

Take-home diaries. Loudness and clarity ratings also were obtained from both groups in their own real-world listening situations. The participants were given diaries to fill out after Weeks 1, 2, 4, and 5. They used the same loudness and clarity scales (see Figure 5), and their results are shown in Figure 6.

The mean loudness rating trend for new wearers was a slight increase over time from slightly below “Comfortable” to slightly above “Comfortable.” For experienced wearers, it was a slight reduction from “Comfortable” toward “Softer but Comfortable.” For both groups, the overall gain of the instruments was rising toward full target or staying at full target for the entire 5-week span. Mean clarity ratings for both groups stayed consistently between “Good” and “Very Good” for the full 5 weeks of the trial. These results differ somewhat from those in Figure 4; it is important to remember that the participants are rating loudness and clarity for all listening situations in the diary (Figure 6), but only for quiet listening in the sound booth (Figure 4).

There are two other potentially confounding factors with regard to the ratings for loudness and clarity provided in the take-home diaries. The volume control (VC) and the SmartFocus (SF) control settings can have a major impact on both loudness and clarity perception. To minimize the impact of these two controls, the learning features for both were disabled, ensuring that the hearing aids were always at the default settings upon start-up. But datalogging for the two features was enabled so that we could see if the wearers had made consistent adjustments over time. The assumption was that the VC or the SF (or both) would be routinely turned down if the automatic adaptation was making the hearing aids too loud for the wearers at any point over the 5-week trial. The mean logged changes to the VC and SF controls (±1 SD) are shown in Figure 7.

The mean logged changes to the VC and SF controls were minimal, typically less than 1 increment on the control. One increment on the VC equals a 1 dB change, and one increment on the SF control equals one step up (toward clarity) or one step down (toward comfort) on a 21-step control. The relatively large standard deviation on the controls of the experienced wearers was due mostly to three super-power BTE wearers who turned the volume controls up over time rather than down.
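To see how a few wearers can inflate the standard deviation while the mean stays under 1 increment, consider the following minimal sketch. The numbers are hypothetical and are not the study's datalogging values: 17 wearers who leave the volume control alone and 3 who turn it up by 6 increments (6 dB at 1 dB per increment). The `summarize` helper is likewise an illustrative name.

```python
from statistics import mean, stdev

# Hypothetical logged VC changes in dB (1 increment = 1 dB), NOT study data:
# 17 wearers who left the control alone and 3 who turned it up.
vc_changes_db = [0.0] * 17 + [6.0] * 3


def summarize(changes):
    """Return (mean, sample standard deviation) of logged control changes."""
    return mean(changes), stdev(changes)


mean_all, sd_all = summarize(vc_changes_db)
mean_rest, sd_rest = summarize(vc_changes_db[:17])

print(f"all wearers:         mean {mean_all:+.2f} dB, SD {sd_all:.2f} dB")
print(f"without the 3 users: mean {mean_rest:+.2f} dB, SD {sd_rest:.2f} dB")
```

In this made-up data set the mean change remains below 1 dB, yet the spread is driven almost entirely by the three wearers who raised the volume, which mirrors the pattern described for the experienced group.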

Summary

A total of 36 hearing aid wearers (20 experienced; 16 new) were fitted with Unitron Quantum hearing aids employing an automatic adaptation manager that was set to increase the initial fitting gain model over time at a fixed rate of 5% per week. Neither new nor experienced wearers rated the hearing aids as getting louder over time. New wearers did rate the clarity of the hearing aids as gradually increasing over time when listening to quiet speech under controlled conditions in a sound-treated room. Experienced wearers supplied clarity ratings that trended upward over the first 2 weeks of the trial and then remained constant as more of their fittings reached full target and stopped adapting.

The take-home diaries for both groups of participants indicated that there was no perception of increased loudness over the 5-week adaptation period and the clarity for speech was always rated between “good” and “very good.” Given that there were no substantial VC or SF control changes, it is reasonable to assume that participants had no problem adapting to the gradually increasing gain model, even at a fairly quick adaptation rate.

References

1. Turner C, Humes L, Bentler R, Cox R. A review of past research on changes in hearing aid benefit over time. Ear Hear. 1996;17(3):14S-28S.

2. Keidser G, O’Brien A, Carter L, McLelland M, Yeend I. Variation in preferred gain with experience for hearing-aid users. Int J Audiol. 2008;47:621-635.

3. Gatehouse S. The time course and magnitude of perceptual acclimatization to frequency responses: evidence from monaural fitting of hearing aids. J Acoust Soc Am. 1992;92:1258-1268.

4. Mueller H, Powers T. Consideration of auditory acclimatization in the prescriptive fitting of hearing aids. Sem Hear. 2001;2:103-124.

5. Schum D. Adaptation management for amplification. Sem Hear. 2001;2:173-182.

6. Dillon H, Keidser G, Ching T, Flax M, Brewer S. The NAL-NL2 Prescription Procedure. Phonak Focus. 2011:40.

7. Scollie S, Seewald RC, Cornelisse LE, Moodie S, Bagatto M, Moodie K, Pumford J. The Desired Sensation Level multistage input/output algorithm. Trends Amplif. 2005;9(4):159-197.

8. Seewald RC, Moodie S, Scollie S, Bagatto M. The DSL method for pediatric hearing instrument fitting: historical perspective and current issues. Trends Amplif. 2005;9(4):145-157.

9. Pumford J, Hayes D, Cornelisse LE. Automatic adaptation management: addressing first-fit amplification considerations. Hearing Review. 2012;19(3):34-36. Available at: www.hearingreview.com/issues/articles/2012-03_03.asp

Citation for this article:

Hayes D, Pumford J, Cornelisse L. Evaluation of an Automatic Adaptation Manager. Hearing Review. 2012;19(09):32-37.