Listening in the presence of background noise has traditionally posed the greatest challenge for hearing aid wearers and is also a primary source of dissatisfaction with hearing aids.1,2 Ideally, an intelligent hearing instrument would distinguish between speech and noise, and then amplify the desirable speech while suppressing undesirable noise in the signal. Recent developments3 in digital signal processing—including intelligent signal detection and noise reduction and switchable directional microphones—have helped to make this wish a reality.

Classifying Noise

The effectiveness of a noise reduction algorithm depends primarily on the design of the hearing aid signal detection and classification system. Some earlier noise reduction algorithms tested only one dimension of the signal to determine if a sound was a desirable signal or a noise. For example, one algorithm design categorized sound events by amplitude or intensity in each frequency region. Those sound events with the lowest amplitude were assumed to be noise. The gain for each frequency band was calculated separately to help minimize the effect of the identified noise. After applying the calculated gain in each band, the output signal was formed by recombining signals from each frequency band.

However, the incoming signal cannot be properly classified as speech or noise on the basis of a single dimension; sound events cannot be accurately categorized solely by their amplitudes. Furthermore, a single dimension does not take into account changing conditions in which noise is more or less prevalent at different times. For example, when a listener rides in a car with the radio on, the amplitude of the car noise rises and falls as the car changes speed, while the level of the speech from the radio remains constant. As a result, the more intense noise events, such as engine noise when the car speeds up, will not be categorized as noise, while many non-noise sound events, such as the car radio, will be categorized as noise.3
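The amplitude-only approach, and the way it fails in the car example, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any manufacturer's algorithm: the function names, the 6 dB margin, the 10 dB reduction and the frame levels are all hypothetical. Frames of one band that lie within a margin of the quietest frame are labeled noise and attenuated.

```python
"""Minimal sketch of a single-dimension (amplitude-only) noise classifier.

All names and numbers are hypothetical, chosen only for illustration.
"""


def amplitude_only_labels(frame_levels_db, margin_db=6.0):
    """Label each frame of one band 'noise' if it lies within margin_db of the quietest frame."""
    floor_db = min(frame_levels_db)
    return ["noise" if level <= floor_db + margin_db else "signal" for level in frame_levels_db]


def amplitude_only_gains(labels, reduction_db=10.0):
    """Attenuate frames labeled noise by reduction_db; leave the others at 0 dB gain."""
    return [-reduction_db if label == "noise" else 0.0 for label in labels]


if __name__ == "__main__":
    # Hypothetical band levels over time: the radio speech holds steady near 60 dB,
    # and engine noise pushes the band to about 75 dB while the car accelerates (frames 2-3).
    levels = [60.0, 61.0, 75.0, 76.0, 60.0]
    labels = amplitude_only_labels(levels)
    print(labels)                        # ['noise', 'noise', 'signal', 'signal', 'noise']
    print(amplitude_only_gains(labels))  # [-10.0, -10.0, 0.0, 0.0, -10.0]
```

The loud engine frames pass through untouched while the radio-only frames are attenuated, which is exactly the misclassification the car example describes.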

The modulation index is another dimension that can be used to categorize the incoming signal as either speech or noise.4-9 The modulation index is defined as the rate of change of the signal amplitude in each band. Speech exhibits rapid and frequent amplitude changes and, therefore, has a high modulation index. Steady-state noise, on the other hand, has a low modulation index. If noise is detected in a given band, the gain of that band is reduced relative to the other, non-noise bands. Thus, bands with noise are suppressed in favor of bands without noise.
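Modulation-based detection can be sketched the same way. Following the definition above, this minimal sketch takes the modulation index to be the mean absolute frame-to-frame change of a band's envelope in dB; the threshold, the reduction amount and the example envelopes are hypothetical, and real systems may define the index differently.

```python
"""Minimal sketch of modulation-based noise detection per band.

The modulation index is taken here as the mean absolute frame-to-frame change of a
band's envelope (dB per frame). Threshold and reduction values are hypothetical.
"""


def modulation_index(envelope_db):
    """Mean absolute change of the band envelope between consecutive frames."""
    if len(envelope_db) < 2:
        raise ValueError("need at least two envelope frames")
    diffs = [abs(b - a) for a, b in zip(envelope_db, envelope_db[1:])]
    return sum(diffs) / len(diffs)


def modulation_gains(band_envelopes_db, threshold_db=3.0, reduction_db=10.0):
    """Reduce the gain of bands whose modulation index falls below threshold_db."""
    gains = []
    for envelope in band_envelopes_db:
        is_noise = modulation_index(envelope) < threshold_db
        gains.append(-reduction_db if is_noise else 0.0)
    return gains


if __name__ == "__main__":
    speech_band = [40.0, 55.0, 42.0, 60.0, 45.0]  # rapid, frequent swings
    fan_band = [50.0, 50.5, 49.8, 50.2, 50.1]     # nearly steady
    print(modulation_gains([speech_band, fan_band]))  # [0.0, -10.0]
```

Each band is judged independently, so only the steady fan band is turned down relative to the speech band.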

Classifying noise solely on the basis of its modulation index assumes that the frequency spectra of all noises show little or no variation over time. Such a system would be good at detecting stationary or slowly changing pseudo-stationary noise, but these types of noise do not make up the majority of listening environments. Consequently, just as noise cannot be classified based solely on its amplitude, it may not be appropriate to classify the incoming signal as speech or noise based solely on its modulation index.

Multidimensional Signal Detection

A more effective method of signal characterization and noise reduction examines several dimensions of the signal simultaneously. The system is then able to adapt to signals with different noise content over time and, therefore, is designed to detect and suppress many different types of noise.

In ClearPath, a new noise reduction technology used in Unitron Hearing's Conversa™, incoming signals are analyzed in 16 separate bands along 3 different dimensions rather than along a single dimension. Amplification or suppression is then applied to the signal on a weighted basis according to this categorization.

Signal Analysis and Classification

Noise can generally be classified into three major categories based on its characteristics:

- Stationary noise (Example: air conditioner or motor/engine);

- Pseudo-stationary noise (Example: traffic or a crowd of people); and

- Transient noise (Example: hammering or a door slam).

An effective signal detection and noise reduction system must be able to accurately classify each signal as one of these three types of noise or as a desirable signal (eg, speech or music).


The differing characteristics of these four signal categories are illustrated in Figure 1.

The noise reduction technology used in Conversa analyzes and classifies sounds by simultaneously examining three specific characteristics inherent in the signals:

- Intensity change;

- Modulation frequency; and

- Time.

Using these three dimensions, the four signal types (three types of noise plus desirable signals) can be placed on the continuum shown in Table 1.

An analysis of intensity change, modulation frequency, and time is performed simultaneously in each narrow frequency band to determine a subindex for each of these three dimensions. The subindices are combined to produce a signal index on a three-dimensional continuum within each frequency band. The signal in each band is classified as one of the four signal types by its three-dimensional index (Figure 2). This signal index determines how much the output of that band is amplified or suppressed. Consequently, the most gain is applied to bands containing desirable signals, and less or no gain is applied to bands containing noise. This is designed to result in a reliable, accurate, adaptive signal detection system to drive the noise reduction algorithm.10
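One way to picture classification on a three-dimensional continuum is a nearest-prototype rule: each band's three subindices form a point, the band takes the label of the closest prototype, and that label sets the band's gain. This sketch assumes subindices scaled to 0-1, and its prototype points, its reading of the time dimension as event duration, and its per-class gains are all hypothetical. It is a generic nearest-centroid classifier, not the ClearPath algorithm, whose internals the text does not give, and mapping each class to a single gain simplifies what the text describes as a weighted signal index.

```python
"""Nearest-prototype sketch of classifying a band by three subindices.

Each band is described by (intensity_change, modulation_frequency, duration), each
scaled to 0..1. Prototype points and gains are hypothetical placeholders.
"""
import math

PROTOTYPES = {
    "stationary": (0.1, 0.1, 0.9),
    "pseudo-stationary": (0.4, 0.3, 0.7),
    "transient": (0.9, 0.2, 0.1),
    "desirable": (0.7, 0.8, 0.6),
}

# Hypothetical gain (dB) per class: most gain for desirable signals, less for noise.
CLASS_GAIN_DB = {
    "stationary": -12.0,
    "pseudo-stationary": -8.0,
    "transient": -6.0,
    "desirable": 0.0,
}


def classify_band(subindices):
    """Return the signal type whose prototype is nearest (Euclidean) to the subindex point."""
    return min(PROTOTYPES, key=lambda name: math.dist(subindices, PROTOTYPES[name]))


def band_gains_db(all_band_subindices):
    """Classify every band independently and look up its gain."""
    return [CLASS_GAIN_DB[classify_band(point)] for point in all_band_subindices]


if __name__ == "__main__":
    bands = [(0.12, 0.05, 0.95), (0.75, 0.85, 0.55), (0.95, 0.15, 0.05)]
    print([classify_band(b) for b in bands])  # ['stationary', 'desirable', 'transient']
    print(band_gains_db(bands))               # [-12.0, 0.0, -6.0]
```

`math.dist` requires Python 3.8 or later. A graded version could interpolate gain from the distances instead of picking one class per band.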


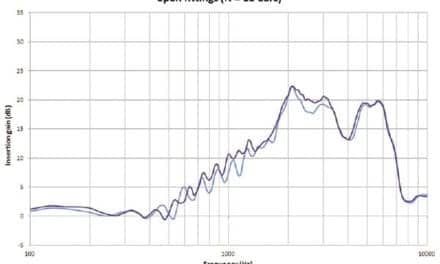

Optimization of Noise Reduction

Spectral Weighting: After reliable signal detection, the algorithm is designed to reduce unwanted background noise with minimal impact on important speech cues. A spectral weighting bias is incorporated that tempers the degree of noise suppression across frequencies by controlling the amount of gain reduction in each channel. For example, gain reduction may be applied most aggressively in the low-frequency channels, moderately in the high-frequency channels, and least aggressively in the mid-frequency channels, where the most important speech cues occur. These weighting factors are illustrated in Figure 3.
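A spectral weighting bias can be sketched as a per-channel multiplier on whatever gain reduction the detector requests. The region boundaries (750 Hz and 3 kHz) and the weight values below are hypothetical, chosen only to reproduce the ordering described in the text (low frequencies most, high frequencies moderately, mid frequencies least); the product's own weighting factors are the ones illustrated in Figure 3.

```python
"""Sketch of a spectral weighting bias on per-channel gain reduction.

The region edges (750 Hz, 3 kHz) and weights (1.0, 0.3, 0.6) are hypothetical.
"""


def spectral_weight(center_hz, low_edge_hz=750.0, high_edge_hz=3000.0):
    """Fraction of the requested gain reduction allowed in a channel."""
    if center_hz < low_edge_hz:
        return 1.0  # low frequencies: reduction applied most aggressively
    if center_hz > high_edge_hz:
        return 0.6  # high frequencies: moderate reduction
    return 0.3      # mid frequencies carrying key speech cues: least reduction


def weighted_reduction_db(center_freqs_hz, requested_reduction_db):
    """Scale each channel's requested gain reduction (dB) by its spectral weight."""
    return [
        round(spectral_weight(f) * r, 2)
        for f, r in zip(center_freqs_hz, requested_reduction_db)
    ]


if __name__ == "__main__":
    centers = [250.0, 1000.0, 2000.0, 4000.0]
    requested = [10.0, 10.0, 10.0, 10.0]  # detector asks for 10 dB everywhere
    print(weighted_reduction_db(centers, requested))  # [10.0, 3.0, 3.0, 6.0]
```

Keeping the weights separate from the detector means the bias shapes the suppression without changing how noise is detected.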


Attack and Release Times: The attack and release times must be optimized to minimize the impact on speech sounds when noise reduction is active. Time constants are a crucial factor in a noise reduction algorithm. If the algorithm reacts too quickly to changes in the signal index, a sudden transient burst from a brief phoneme (eg, /d/ or /t/) could be suppressed as if it were noise and become inaudible. Conversely, when the time constants are too long, pseudo-stationary and transient noises would not be detected quickly enough. The gain in the frequency channels containing noise would not be reduced in time, and there would be no benefit from the noise reduction algorithm. Furthermore, as the signal changes from noise to speech, the algorithm would take too long to restore the gain in each band, so important speech information may be lost.
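The effect of time constants can be illustrated with a common technique, one-pole smoothing with separate attack and release coefficients, applied to the gain a detector requests. The sketch assumes gains in dB updated once per frame; the 100 Hz frame rate and the 50 ms and 1 s constants are hypothetical, and the source does not say which smoothing method the product uses.

```python
"""One-pole attack/release smoothing of a band's noise-reduction gain (dB).

"Attack" governs how fast gain reduction engages; "release" governs how fast gain
recovers. Frame rate and time constants are hypothetical.
"""
import math


def smooth_gain_db(target_gains_db, frame_rate_hz, attack_s, release_s, initial_db=0.0):
    """Follow a target gain trajectory with separate attack and release time constants."""
    a_attack = math.exp(-1.0 / (attack_s * frame_rate_hz))
    a_release = math.exp(-1.0 / (release_s * frame_rate_hz))
    gain = initial_db
    smoothed = []
    for target in target_gains_db:
        # Falling target = more reduction (attack); rising target = recovery (release).
        a = a_attack if target < gain else a_release
        gain = a * gain + (1.0 - a) * target
        smoothed.append(gain)
    return smoothed


if __name__ == "__main__":
    noise_onset = [-10.0] * 20  # 10 dB reduction requested for 20 frames (0.2 s at 100 Hz)
    fast = smooth_gain_db(noise_onset, 100.0, attack_s=0.05, release_s=0.05)
    slow = smooth_gain_db(noise_onset, 100.0, attack_s=1.0, release_s=1.0)
    print(f"fast: {fast[-1]:.1f} dB, slow: {slow[-1]:.1f} dB")  # fast: -9.8 dB, slow: -1.8 dB
```

With the 50 ms constant the requested reduction is almost fully in place within 0.2 s, while the 1 s setting has barely begun. Conversely, if a brief burst such as /t/ momentarily looked like noise to the detector, very short constants would let it pull the gain down, which is the trade-off described above.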


Figure 4 shows the effects of different time constants on a signal consisting of speech in the presence of fan noise. Note that with slower time constants (top diagram), noise reduction is less effective in removing the noise from the speech than with faster time constants (bottom diagram).

Adjustable Noise Reduction: Allowing the fitter to adjust the degree of noise reduction adds further flexibility to a noise reduction system. A choice of off, moderate, or maximum for each listening program allows different settings according to the wearer's needs or preferences in different listening environments. For example, switching from moderate to maximum noise reduction increases the amount of available gain reduction equally in each frequency band. This means that the absolute amount of gain reduction can be changed while the spectral weighting bias remains unaffected (Figure 5).
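The claim that the setting changes absolute reduction without altering the spectral weighting bias can be checked with a small sketch. It reads "equally" as the same scale factor in every band, which is an assumption since the text does not state the rule; the depth values and band weights are hypothetical. Normalizing each profile to its peak exposes the weighting shape, which comes out the same at the moderate and maximum settings.

```python
"""Sketch of a fitter-selectable noise-reduction degree.

Assumption: each setting selects a maximum depth (dB) that multiplies a fixed
per-band spectral weight, so every band is scaled by the same factor. Depths and
weights are hypothetical.
"""

DEPTH_DB = {"off": 0.0, "moderate": 6.0, "maximum": 12.0}


def reduction_profile_db(band_weights, setting):
    """Gain reduction per band (dB) for the chosen setting."""
    depth_db = DEPTH_DB[setting]
    return [w * depth_db for w in band_weights]


def relative_shape(profile_db):
    """Normalize a profile to its largest value, exposing the spectral weighting bias."""
    peak = max(profile_db)
    return [r / peak for r in profile_db] if peak > 0 else [0.0 for _ in profile_db]


if __name__ == "__main__":
    weights = [1.0, 0.3, 0.6]  # hypothetical low, mid, high band weights
    for setting in ("off", "moderate", "maximum"):
        profile = reduction_profile_db(weights, setting)
        print(setting, [round(r, 1) for r in profile], [round(s, 2) for s in relative_shape(profile)])
```

Under this assumption the maximum setting doubles every band's reduction relative to moderate, while the normalized shape stays fixed.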


Summary

A reliable, accurate, adaptive signal detection and noise reduction system applies the most gain to bands containing desirable signals and less or no gain to bands containing noise. In the multidimensional signal detection and optimized noise reduction algorithm described, the actual amount of gain reduction at any frequency over a given time frame is dependent upon five factors:

- The mixture of signals and noise present in each band;

- The signal index, as defined by the multidimensional detection parameters;

- The spectral weighting bias;

- The time constants of the noise reduction algorithm; and

- The degree of noise reduction applied (ie, off, moderate, or maximum).

Individuals fit with this technology consistently comment on its comfort and effectiveness in noisy settings. The multidimensional signal detection, adjustable noise reduction, and optimized attack and release times are designed to reduce unwanted background noise with minimal impact on important speech cues. When coupled with directional microphone technology, this noise reduction system can provide an effective solution for listening to speech in noise, increasing users' overall satisfaction with their hearing aids.

This article was submitted to HR by Nancy Tellier, MSc, corporate audiologist; Horst Arndt, PhD, senior technical advisor; and Henry Luo, PhD, manager of DSP application, at Unitron Hearing, Kitchener, Ontario. Correspondence can be addressed to Nancy Tellier, Unitron Hearing, 20 Beasley Drive, Kitchener, ON N2G 4X1, Canada.

References

1. Kochkin S. MarkeTrak VI: 10-year customer satisfaction trends in the US hearing instrument market. Hearing Review. 2002;9(10):14-25,46.

2. Kochkin S. MarkeTrak VI: Consumers rate improvements sought in hearing instruments. Hearing Review. 2002;9(11):18-22.

3. Graupe D, et al., inventors. Method and Means for Adaptively Filtering Near-Stationary Noise from an Information Bearing Signal. US Patent 4,185,168. Jan. 22, 1980.

4. Bray V, Nilssen MJ. Objective test results support benefits of a DSP noise reduction system. Hearing Review. 2000;7(11):60-65.

5. Edwards B, Hou Z, Struck CJ, Dharan P. Signal-processing algorithms for a new software-based, digital hearing device. Hearing Journal. 1998;51(9):44.

6. Lurquin P, Delacressonniere C, May A. Examination of a multi-band noise cancellation system. Hearing Review. 2001;8(1):48-54,60.

7. Powers TA. Benefits of DSP: A Review. Hearing Review. 2000;7(10):62-67.

8. Powers TA. The use of digital features to combat background noise. In: Kochkin S, Strom KE, eds. High Performance Hearing Solutions, Vol. 3. Hearing Review. 1999;6(1)[Suppl]:36-39.

9. Smriga D. Problem solving through "smart" digital technology. Hearing Review. 1999;6(1):58-60.

10. Luo H, Arndt H, inventors. Apparatus and Method for Adaptive Signal Characterization and Noise Reduction in Hearing Aids and Other Audio Devices. US Patent Application Publication US 2002/0191804 A1, Dec. 19, 2002.
