| This article was submitted to HR by Arthur Schaub, Dipl Phys, an innovation and technology executive at Bernafon AG, Bern, Switzerland. Schaub, who has a degree in physics, is also the author of the book, Digital Hearing Aids, published last year by Thieme Publishers (www.thieme.com). Correspondence can be addressed to HR at [email protected] or Arthur Schaub at . |

The basic principle of wide dynamic range compression (WDRC) lies in mapping the full range of sound pressure levels (SPL), from low to high, into the restricted range that is both audible and comfortable for people with hearing impairment. In this way, a hearing instrument makes soft sounds audible again, a prerequisite for restoring speech intelligibility, and at the same time avoids emitting sounds that are too loud. The basic principle of WDRC implies three necessary actions:

- Continuously measuring the SPL of the incoming signal;

- Determining the gain that is appropriate to the constantly changing SPL;

- Applying the time-varying gain to the acoustic signal.
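
The three actions above can be sketched in a few lines of Python. This is only a minimal illustration of generic WDRC, not Bernafon's implementation: the function names, the calibration offset, the compression threshold, the ratio, and the linear gain are all assumed values chosen for readability.

```python
import math

def measure_level_db(samples, calibration_db=100.0):
    """Action 1: mean-square level of a block of samples, in dB.

    calibration_db is a hypothetical offset mapping digital full scale to SPL.
    """
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10.0 * math.log10(max(mean_square, 1e-12)) + calibration_db

def wdrc_gain_db(level_db, threshold_db=45.0, ratio=2.0, linear_gain_db=30.0):
    """Action 2: static compression curve (all parameter values are illustrative)."""
    if level_db <= threshold_db:
        return linear_gain_db
    # Above the threshold, each extra dB of input adds only 1/ratio dB of output.
    return linear_gain_db - (level_db - threshold_db) * (1.0 - 1.0 / ratio)

def apply_gain(samples, gain_db):
    """Action 3: scale the waveform by the gain converted from dB to a factor."""
    factor = 10.0 ** (gain_db / 20.0)
    return [s * factor for s in samples]

# A quiet and a loud 1 kHz tone, 10 ms each at 16 kHz (illustrative signals).
fs = 16000
for amplitude in (0.01, 0.5):
    block = [amplitude * math.sin(2 * math.pi * 1000 * n / fs) for n in range(160)]
    level = measure_level_db(block)
    gain = wdrc_gain_db(level)
    amplified = apply_gain(block, gain)
    print(f"input {level:5.1f} dB -> gain {gain:4.1f} dB")
```

The quiet tone receives more gain than the loud one, which is the essence of compression. A real instrument would update the level and gain continuously rather than once per block.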

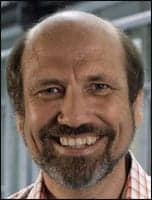

The architecture of Bernafon’s ChannelFree processing system is shown in the block diagram of Figure 1. The SPL Measurement block measures the SPL of the acoustic input signal and feeds the measured value to the Filter Control. The Filter Control determines the appropriate gain and adjusts the Controllable Filter in such a way that, at any point in time, the Filter amplifies the acoustic signal as required by its SPL. Finally, the Synchronization keeps the gain and the acoustic signal time-aligned.

This last action extends the basic WDRC processing steps. Its aim is to compensate for the lag between the acoustic signal and the gain applied to it.
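
Why time alignment matters can be shown with a toy example in the level domain. The sketch below assumes that the level estimate arrives a fixed number of samples late (the lag of 4 samples, the compression parameters, and the function names are hypothetical) and compares the output with and without a matching delay in the signal path.

```python
def wdrc_gain_db(level_db, threshold_db=45.0, ratio=2.0, linear_gain_db=30.0):
    """Static compression curve with illustrative parameter values."""
    if level_db <= threshold_db:
        return linear_gain_db
    return linear_gain_db - (level_db - threshold_db) * (1.0 - 1.0 / ratio)

def output_levels(input_levels_db, measurement_lag, signal_delay):
    """Output level per sample when the level estimate lags by measurement_lag
    samples and the signal path is delayed by signal_delay samples."""
    out = []
    for n in range(len(input_levels_db)):
        measured = input_levels_db[max(n - measurement_lag, 0)]
        signal = input_levels_db[max(n - signal_delay, 0)]
        out.append(signal + wdrc_gain_db(measured))
    return out

levels = [50.0] * 20 + [80.0] * 20   # soft sound followed by a loud onset
lag = 4                              # assumed measurement lag in samples

unaligned = output_levels(levels, measurement_lag=lag, signal_delay=0)
aligned = output_levels(levels, measurement_lag=lag, signal_delay=lag)

print("peak output without alignment:", max(unaligned), "dB")
print("peak output with alignment:   ", max(aligned), "dB")
```

Without alignment, the loud onset is briefly amplified with the high gain intended for the preceding soft sound, so the output overshoots. Delaying the signal by the same amount as the measurement removes the overshoot at the cost of a small added delay.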

| FIGURE 1. Block diagram of Bernafon’s ChannelFree processing system. |

Features and Advantages

ChannelFree is unique due to a set of four features:

Instantaneous response to sound events. This denotes a precise reaction exactly at the point in time when sound onsets and offsets occur. This feature is the result of a concerted action by all four processing blocks: acquisition of a distortion-free SPL value within milliseconds, swift adjustments to the amplification, and precise time alignment of the acoustic signal and the gain applied to it. This instantaneous response is in contrast to compression systems with time constants ranging from several tens to hundreds of milliseconds.

Use of wideband SPL. As shown in Figure 1, the SPL Measurement block processes the entire acoustic input signal. The measured wideband SPL value then propagates to the Filter Control and hence determines the amount of gain that the Controllable Filter applies to the acoustic signal. Use of the wideband SPL is in contrast to the conventional multichannel approach with compression in separate frequency channels.

Measuring and filtering as parallel processes. The SPL Measurement and the Controllable Filter reside on parallel signal paths and therefore proceed simultaneously. This implementation as parallel processes is in contrast to conventional approaches where filtering and level measurement occur one after another.

Continuous frequency response and filter adjustments. This feature results from the interaction of the Filter Control and the Controllable Filter. At any point in time, the Controllable Filter applies gain as a continuous function across frequency, and the Filter Control adjusts this frequency response continuously over time. Continuous frequency response and filter adjustments are in contrast to conventional approaches that split the acoustic signal into either frequency bands or time segments, and thus process the acoustic signal in a discontinuous way.

So far, we have looked at technical and processing issues. But how do these features translate into benefits?

Compensating for the Missing Hearing Mechanism: Revisiting Moore et al

As set out earlier, WDRC aims at compensating for increased hearing thresholds and abnormal loudness growth in the impaired ear. According to Moore et al,1 these manifestations are symptoms of a fast-acting compressive mechanism that operates in the healthy ear, but is lost in the impaired ear.

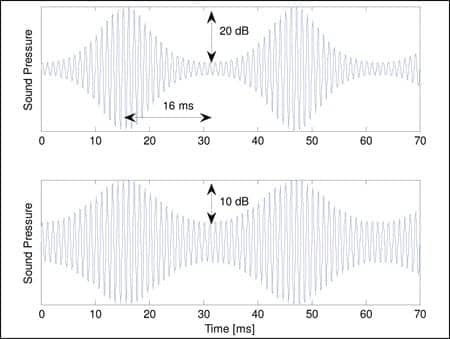

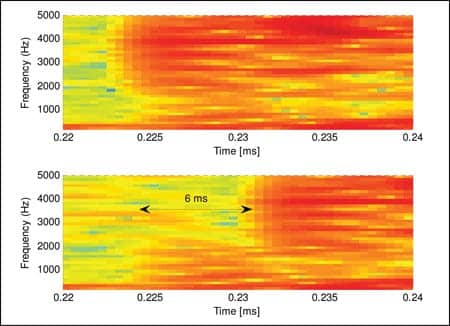

In their 1996 experiment, Moore et al1 investigated the effect of loudness recruitment on the perception of amplitude modulation. They used test signals with a modulation frequency of up to 32 Hz and a modulation depth of up to 20 dB (Figure 2). The intent was to find the modulation depths required for people to perceive the same loudness with their healthy and with their impaired ear. A key finding was that, with their impaired ears, the test participants required less modulation depth. Moore and colleagues concluded: “The results are consistent with the idea that loudness recruitment results from the loss of a fast-acting compressive nonlinearity that operates in the normal peripheral auditory system.”1

With regard to compensating for the lost mechanism, they state, “If the goal was to restore the dynamic aspects of loudness perception to normal … our data indicate that the compression would need to operate effectively for modulation rates up to at least 32 Hz and for modulation depths up to at least a 20-dB peak-to-valley ratio. In practice this is difficult to achieve.” And even without aiming for loudness normalization, they affirm, “However, even if the goal is a more modest one, to restore audibility of weak sounds while preventing intense sounds from becoming uncomfortably loud, the compression would still need to be fast acting to ensure that weak sounds are audible when they occur just after strong sounds.”1

| FIGURE 2. Moore et al1 used signals with a modulation frequency of up to 32 Hz and a modulation depth of up to 20 dB (top). However, with an impaired ear, the test participants required less modulation depth (bottom). |

At this point, it is worthwhile to examine the test signals. At a 32-Hz modulation rate, one modulation cycle lasts about 31 ms (1/32 s), so loud and soft portions alternate approximately every 16 ms, as indicated in the top panel of Figure 2. This duration and the 20-dB level difference are representative of the shortest soft consonants surrounded by loud vowels in running speech. Hence, the conclusions extend from the test signals to speech in our everyday acoustic environments.

With respect to hearing instruments, the researchers note that “most commercially available hearing aids with ‘fast-acting compression’ have release times in the range 50 to 200 ms. For such release times, the compression would not be very effective for modulation rates above 5 to 10 Hz. … For example, [for an AGC system] with an attack time of 2 ms, a release time of 20 ms, and a compression ratio of 2, the effective compression ratio drops to less than 1.5 with a modulation depth of 20 dB and a modulation rate of 30 Hz.”1 Shorter time constants, however, carry the risk of introducing distortion.
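
The loss of effective compression at high modulation rates can be explored with a simplified simulation. The sketch below is not the model used by Moore et al: it assumes a one-pole level smoother that works directly on dB values, treats the attack and release times as exponential time constants, and uses a hypothetical function name and a 70 dB mean level. Its numbers therefore should not be expected to match the quoted figures. It only illustrates the trend.

```python
import math

def effective_compression_ratio(attack_s, release_s, ratio=2.0,
                                mod_rate_hz=30.0, depth_db=20.0,
                                fs=16000, duration_s=1.0):
    """Input modulation depth divided by output modulation depth (both in dB)."""
    alpha_attack = math.exp(-1.0 / (attack_s * fs))
    alpha_release = math.exp(-1.0 / (release_s * fs))
    mean_db = 70.0           # assumed mean level of the modulated signal
    estimate = mean_db       # state of the level detector
    inputs, outputs = [], []
    n_total = int(duration_s * fs)
    for n in range(n_total):
        phase = 2.0 * math.pi * mod_rate_hz * n / fs
        level = mean_db + 0.5 * depth_db * math.sin(phase)
        # Fast smoothing when the level rises, slow smoothing when it falls.
        alpha = alpha_attack if level > estimate else alpha_release
        estimate = alpha * estimate + (1.0 - alpha) * level
        gain = -(estimate - mean_db) * (1.0 - 1.0 / ratio)
        if n >= n_total // 2:            # analyse the settled second half only
            inputs.append(level)
            outputs.append(level + gain)
    return (max(inputs) - min(inputs)) / (max(outputs) - min(outputs))

for attack, release in ((0.002, 0.020), (0.002, 0.200)):
    ecr = effective_compression_ratio(attack, release)
    print(f"attack {attack * 1e3:.0f} ms, release {release * 1e3:.0f} ms: "
          f"effective ratio {ecr:.2f}")
```

In this simplified model the effective ratio stays below the nominal ratio of 2 and falls further as the release time grows, because the level estimate can no longer follow the valleys of the modulation.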

Similarly, ChannelFree had to overcome several kinds of distortion:

- Simply reducing the time constants in SPL measurement causes distortion, because the oscillations in the acoustic waveform produce “ripple” in the measured SPL value. This ripple propagates to the amplification and thus distorts the acoustic signal. Therefore, ChannelFree uses a sophisticated algorithm to suppress ripple, while still tracking the level difference of consecutive phonemes in speech.2

- Rapidly varying the amplification causes distortion when there is a time lag between the acoustic signal and the gain applied. The output signal then overshoots and undershoots repeatedly. ChannelFree therefore time-aligns the acoustic signal and the gain applied.

- Swiftly varying the amplification also causes distortion when applied in separate compression channels. Multichannel compression (especially fast-acting compression) systematically flattens the spectrum of any sound it processes. ChannelFree avoids multichannel compression and instead preserves spectral contrast by using the wideband SPL (see the following section).

As a result, ChannelFree incorporates several processing novelties to achieve compression with a 2 ms attack time and a 10 ms release time, while avoiding distortion.
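
The ripple problem described in the first point above is easy to reproduce with a naive level detector. The sketch below assumes a one-pole smoother of the squared signal and a 200 Hz test tone. Both are illustrative choices, and the code does not represent the ripple-suppression algorithm used in ChannelFree, which is not described here.

```python
import math

def level_ripple_db(time_constant_s, tone_hz=200.0, fs=16000, duration_s=0.5):
    """Peak-to-peak ripple (dB) of a naive level estimate for a steady tone."""
    alpha = math.exp(-1.0 / (time_constant_s * fs))
    mean_square = 0.0
    levels = []
    n_total = int(duration_s * fs)
    settled_from = n_total - int(0.1 * fs)   # keep only the last 100 ms
    for n in range(n_total):
        x = math.sin(2.0 * math.pi * tone_hz * n / fs)
        mean_square = alpha * mean_square + (1.0 - alpha) * x * x
        if n >= settled_from:
            levels.append(10.0 * math.log10(mean_square))
    return max(levels) - min(levels)

for tau in (0.001, 0.050):
    print(f"time constant {tau * 1e3:4.0f} ms: ripple {level_ripple_db(tau):.2f} dB")
```

Although the tone has a constant level, the short time constant lets the waveform's own oscillation leak into the level estimate. Any gain derived from that estimate would fluctuate at the same rate and distort the signal.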

Wideband SPL for Preserving Spectral Contrast and Speech Cues

Spectral contrast refers to the peak-to-trough ratio in spectral envelopes of speech phonemes. The spectral envelope of each phoneme has a characteristic shape, and the spectral contrast is required for listeners to tell different phonemes apart.

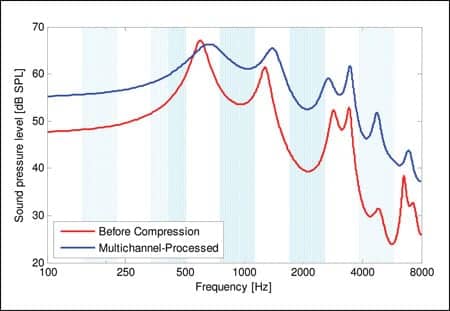

Multichannel compression splits the acoustic signal into partial signals in separate frequency bands, measures a separate level for each band signal, and compresses each band signal according to its band level. In doing so, multichannel compression systematically applies more gain to the troughs than to the peaks, thus filling in the troughs and flattening the spectra.

Figure 3 shows the spectral envelope of the vowel /u/ as in the word “understand,” both before (red) and after multichannel compression (blue). The blue envelope shows heavily filled-in troughs around 1 kHz and 2 kHz. This effect results from compression in multiple channels, which are indicated in Figure 3 as a pattern of white and light blue stripes.

| FIGURE 3. Spectral envelope of vowel /u/, before and after multichannel compression. Multichannel compression applies more gain to the troughs than the peaks, essentially “filling in” or flattening the spectra. |

In a recent study, Bor et al3 investigated this trough-filling effect on vowels. They applied multichannel compression in up to 16 channels, with an attack time of 3 ms, and a release time of 50 ms. The outcome suggested that “Overall spectral contrasts of vowels are significantly reduced as the number of compression channels increases.”3 Next, the researchers investigated the effect on hearing-impaired listeners. As expected, the listeners had more difficulty in telling the different vowels apart. Bor and colleagues observed, “Listeners with mild sloping to moderately severe hearing loss demonstrated poorer vowel identification scores with decreasing spectral contrast.”3

Unfortunately, the study excludes consonants, which are perhaps even more crucial for speech understanding. However, multichannel compression tends to flatten the spectrum of any sound. Therefore, multichannel compression is likely to degrade consonant identification as well.

Unlike multichannel compression, ChannelFree refrains from measuring levels of partial signals in separate frequency bands. Instead, it measures the wideband SPL value, operates on the wideband acoustic signal, and thus preserves the spectral contrast inherent in the signal.
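
A small numeric example makes the difference concrete. The band levels, compression threshold, and compression ratio below are hypothetical values, and applying a single frequency-independent gain is a simplification of wideband-level control rather than a description of the ChannelFree filter.

```python
import math

def compression_gain_db(level_db, threshold_db=30.0, ratio=2.0):
    """Gain reduction of a simple compressor (illustrative parameters)."""
    if level_db <= threshold_db:
        return 0.0
    return -(level_db - threshold_db) * (1.0 - 1.0 / ratio)

def contrast_db(levels_db):
    """Spectral contrast as the highest peak minus the deepest trough."""
    return max(levels_db) - min(levels_db)

# Hypothetical band levels of one phoneme: peaks and troughs across frequency.
band_levels = [60.0, 40.0, 65.0, 45.0, 55.0]

# Multichannel: every band is compressed according to its own level.
multichannel = [lv + compression_gain_db(lv) for lv in band_levels]

# Wideband: one level for the whole signal (power sum of the bands),
# and therefore the same gain change for every band.
wideband_level = 10.0 * math.log10(sum(10.0 ** (lv / 10.0) for lv in band_levels))
wideband_gain = compression_gain_db(wideband_level)
wideband = [lv + wideband_gain for lv in band_levels]

print("contrast at input:       ", contrast_db(band_levels), "dB")
print("contrast, multichannel:  ", contrast_db(multichannel), "dB")
print("contrast, wideband level:", contrast_db(wideband), "dB")
```

With independent channels, the contrast shrinks by the compression ratio because the troughs receive more gain than the peaks. With a gain derived from the wideband level, all bands move together and the contrast is unchanged.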

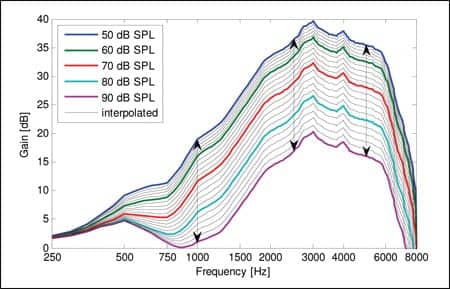

At this point, however, it is important to avoid a potential misunderstanding: ChannelFree is completely different from the outdated single-channel wideband compression scheme that produces the frequency responses illustrated in Figure 4. These frequency responses have the same shape, irrespective of input level. Decades ago, 2-channel compression was the first step to overcome the inflexibility of single-channel wideband compression, introducing different compression ratios in the lower and higher frequencies. Later, multichannel systems further increased flexibility.

| FIGURE 4. Gain shaping of single-channel wideband compression. The equal arrow lengths indicate a constant compression ratio across frequency. |

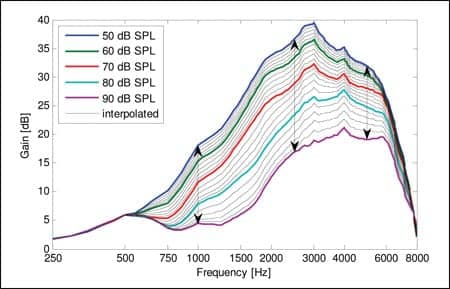

ChannelFree goes even further: the Controllable Filter directly implements continuous frequency responses that coincide with the fitting targets to within 1 dB, thus providing a continuously variable compression ratio across frequency (Figure 5).

| FIGURE 5. Gain shaping of ChannelFree compression. The different arrow lengths indicate variable compression across frequency. |

Minimizing Delay for Low Distortion

We use the term throughput delay to denote the time span between two events: 1) the moment when the microphone picks up a sound, and 2) the moment when the receiver emits the same amplified sound. This delay is most significant in open fittings and hearing aids with large vents, where sound from the free field enters the ear canal and interferes with the amplified and delayed sound from the hearing instrument.

For example, in Figure 6, the top panel shows the spectrogram of an acoustic signal, and the bottom panel shows the spectrogram of the same signal as it arrives in the ear canal. In this example, sound from the free field dominates below 1.5 kHz, whereas amplified sound dominates above it. As the spectrogram shows, the amplified signal lags the direct sound by 6 ms.

| FIGURE 6. Effect of open fittings on the arrival of sound in the ear canal, open versus aided sound. Note the 6 ms time delay. |

This mixture of sounds gives rise to several undesired perceptual effects: echo, sound coloration, rapid frequency glides, and sounds that appear smeared in time, as Stone et al4 describe the phenomena. In their experiments, they investigated the level of disturbance in open fittings, using a scale from 1 to 7, with 1 being “not at all disturbing,” 4 being “disturbing,” and 7 being “highly disturbing.” With gain settings for typical mild-to-moderate high frequency losses, they found that “disturbance increased significantly with increasing delay: the main increase occurred between 4 and 12 ms.”4 Stone and colleagues also note that a disturbance rating of 3 was reached for a delay of about 5.3 ms. Such a delay may seem only moderately constraining, but the delay due to WDRC is just one source. Other processing steps add to the total delay, including the inevitable analog-to-digital (A-D) conversion.
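
One common way to picture the coloration is as a comb filter: where direct and delayed sound have similar strength, they cancel at some frequencies and reinforce each other at others. The sketch below assumes equal strength of both paths, which is the worst case and only applies near the crossover region, and uses the 6 ms delay of Figure 6. The function name is hypothetical.

```python
import cmath
import math

def combined_magnitude(freq_hz, delay_s, aided_gain=1.0):
    """Magnitude of direct sound plus a delayed copy scaled by aided_gain."""
    return abs(1.0 + aided_gain * cmath.exp(-2j * math.pi * freq_hz * delay_s))

delay = 0.006                            # 6 ms, as in the Figure 6 example
first_notch_hz = 1.0 / (2.0 * delay)     # first frequency of full cancellation
notch_spacing_hz = 1.0 / delay           # distance between adjacent notches

print(f"first notch at {first_notch_hz:.1f} Hz, "
      f"then every {notch_spacing_hz:.1f} Hz")
print(f"magnitude at a notch: {combined_magnitude(first_notch_hz, delay):.3f}")
print(f"magnitude at a peak:  {combined_magnitude(notch_spacing_hz, delay):.3f}")
```

The longer the delay, the more densely the notches are packed across frequency. Shorter delays push the notches apart.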

Conventional WDRC schemes tend to have long throughput delays because they usually exhibit serial processing. Schemes with band-splitting, for example, first produce the band signals, then measure their levels, and then compress. The delays in these consecutive operations add up. Schemes with segmentation in time also have consecutive operations: accumulation of signal samples into a buffer, transformation to a spectrum, level measurement, modification of the spectrum, and transformation back into a waveform.

In contrast, ChannelFree exhibits parallel processing; SPL measurement and amplification proceed simultaneously. As a result, it adds only 2.5 ms to the total throughput delay, including the time-alignment of the acoustic signal and the gain applied to it.
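
The difference between serial and parallel processing comes down to simple arithmetic: delays of consecutive stages add up, whereas parallel paths cost only as much as the slowest path once the faster one has been padded for time alignment. The stage delays below are made-up numbers chosen purely to show the calculation. Only the 2.5 ms total for the parallel case is taken from the text.

```python
def serial_delay_ms(stage_delays_ms):
    """Consecutive stages: every stage adds its delay to the total."""
    return sum(stage_delays_ms)

def parallel_delay_ms(path_delays_ms):
    """Parallel paths that are time-aligned: the slowest path sets the total."""
    return max(path_delays_ms)

# Hypothetical serial scheme: band splitting, level measurement, compression.
serial_total = serial_delay_ms([3.0, 2.0, 0.5])

# Hypothetical parallel scheme: a measurement path and a filter path running
# side by side, with the faster path delayed to match the slower one.
parallel_total = parallel_delay_ms([2.5, 1.0])

print(f"serial total:   {serial_total} ms")
print(f"parallel total: {parallel_total} ms")
```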

Prioritizing Resolution

Frequency resolution. Frequency resolution is important to achieve a good match to fitting targets, and also to adjust gain during a fine-tuning session. For this purpose, frequency resolution should allow independent gain settings at the audiometric frequencies from 250 Hz up to 8 kHz. In practice, frequency resolution should also allow compensation for nonideal components in a hearing aid (eg, for the peak that a receiver imposes on the gain curve). For this purpose, a finer resolution (eg, in third-octave bands) is necessary.

Conventional WDRC schemes split the acoustic signal into partial signals and then process the signals in separate frequency bands. In doing so, they introduce a discontinuity: a finite set of gain values, one distinct value per frequency band. To compensate for this discontinuity, conventional systems use overlapping filters. Additionally, to reduce the loss of spectral contrast, they combine signal levels acquired from separate band signals, a countermeasure known as channel coupling. Both countermeasures reduce the frequency resolution to less than what the number of frequency bands suggests. In contrast, ChannelFree needs no such countermeasures; it prioritizes frequency resolution and maintains it throughout.

Temporal resolution. As described at the beginning of this article, temporal resolution is a novel feature of ChannelFree. Some conventional WDRC schemes, however, are less favorable, especially those that split the acoustic signal into time segments and then process consecutive segments separately. These systems determine level and gain just once per segment. In doing so, they restrict the temporal resolution to the segment duration and introduce the discontinuity of a gain function that changes in steps. In this case, overlapping time segments are used to mitigate the drawback. In contrast, ChannelFree requires no countermeasure, but prioritizes and maintains temporal resolution, too.
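
The stepwise gain function of segment-based processing can be illustrated as follows. The sketch assumes a level that rises steadily from 50 to 70 dB, a segment length of 32 samples, a segment level taken as the plain average of the dB values, and illustrative compression parameters. None of these values comes from a specific product.

```python
def gain_db(level_db, threshold_db=45.0, ratio=2.0):
    """Gain reduction of a simple compressor (illustrative parameters)."""
    if level_db <= threshold_db:
        return 0.0
    return -(level_db - threshold_db) * (1.0 - 1.0 / ratio)

def per_sample_gains(levels_db):
    """Gain determined anew for every sample."""
    return [gain_db(lv) for lv in levels_db]

def per_segment_gains(levels_db, segment_len):
    """Gain determined once per segment and held for the whole segment."""
    gains = []
    for start in range(0, len(levels_db), segment_len):
        segment = levels_db[start:start + segment_len]
        held_gain = gain_db(sum(segment) / len(segment))
        gains.extend([held_gain] * len(segment))
    return gains

def largest_step(gains):
    """Largest change between two consecutive gain values, in dB."""
    return max(abs(b - a) for a, b in zip(gains, gains[1:]))

levels = [50.0 + 20.0 * n / 159 for n in range(160)]   # steadily rising level

continuous = per_sample_gains(levels)
stepped = per_segment_gains(levels, segment_len=32)

print(f"largest gain step, per sample:  {largest_step(continuous):.3f} dB")
print(f"largest gain step, per segment: {largest_step(stepped):.3f} dB")
```

The segment-based gain changes only at segment boundaries, and each change is as large as the level drift accumulated over a whole segment. This is the discontinuity that overlapping segments are meant to soften.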

Verification of Sound Quality

For more than a decade, hearing loss has been recognized as the loss of a fast-acting compression mechanism.1 Likewise, existing technology has been recognized as being too slow for effective compensation. ChannelFree has been designed to tackle this problem, offering effective compression for consecutive phonemes in speech, while taking the necessary steps to preserve sound quality.

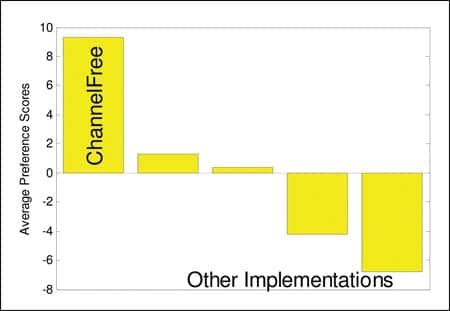

Arguing the concepts and rationale behind a system is good and necessary, but verification is better. Therefore, an early ChannelFree system—known as Symbio—was submitted to a rigorous test. Dillon et al5 compared the perceived sound quality of several advanced hearing instruments for a range of different acoustic signals listened to by normal-hearing and hearing-impaired people. For this review, I focus on the speech and music perception by hearing-impaired listeners. Figure 7 depicts the preference scores of ChannelFree compared to other implementations. The researchers comment, “For the hearing-impaired listeners, Symbio received the highest average scores for male and female voices and piano music.”5

| FIGURE 7. Sound quality scores for speech and music. |

Currently, new ChannelFree hearing instruments are emerging from Bernafon that combine this refined technology with sophisticated adaptive features.

References

1. Moore BCJ, Wojtczak M, Vickers DA. Effect of loudness recruitment on the perception of amplitude modulation. J Acoust Soc Am. 1996;100:481-489.

2. Schaub A. Digital Hearing Aids. New York: Thieme Medical Publishers; 2008. Available at: www.thieme.com/SID2497544392726/productsubpages/pubid1007962669.html.

3. Bor S, Souza P, Wright R. Multichannel compression: effects of reduced spectral contrast on vowel identification. J Sp Lang Hear Res. 2008;51:1315-1327.

4. Stone MA, Moore BCJ, Meisenbacher K, Derleth RP. Tolerable hearing-aid delays. V. Estimation of limits for open canal fittings. Ear Hear. 2008;29:601-617.

5. Dillon H, Keidser G, O’Brien A, Silberstein H. Sound quality comparisons of advanced hearing aids. Hear Jour. 2003;56(4):30-40.

Citation for this article:

Schaub A. Enhancing Temporal Resolution and Sound Quality: A Novel Approach to Compression. Hearing Review. 2009;16(8):28-33.