The ANSI S3.22¹ hearing aid standard has been the defining document for hearing instrument performance parameters in the United States since 1977. However, the standard is seldom discussed anymore. Is it still in use? Is it still applicable and relevant? The answer to both questions is “yes.” This article is designed to bring hearing care professionals up to date on the ANSI S3.22 standard, the differences between the old (1996) and new (2003) standards, and factors one might consider when using this important test.

ANSI S3.22: What’s It Used For?

The US Food and Drug Administration (FDA) at present uses the 1996 version of the standard as the labeling document for hearing aids. Likewise, hearing instrument manufacturers use the ANSI standard to define terms and performance parameters. Essentially, the standard provides a common set of definitions and tests that allows agreement throughout the industry.

For example, the FDA can use the standardized tests to determine whether a particular manufacturer is building its products according to specification. If a tested hearing aid does not meet its published specification, the aid can be forced off the market or the manufacturer can be reprimanded in some way.

In past years, dispensing professionals also used the standard to determine the performance characteristics of particular hearing aids. This should remain an important function of the test. Different hearing aid types can be compared according to the ANSI-derived labeling. The end result: the standard provides a level playing field with which to test hearing instruments produced by manufacturers and to help dispensing professionals verify that any given hearing aid performs to specification.

Revising ANSI Standards for Today’s Hearing Instruments

The ANSI S3/48 working group’s main task is to keep the hearing instrument standard up to date. This voluntary group of hearing professionals, scientists, and engineers usually meets twice a year in conjunction with American Academy of Audiology (AAA) or Acoustical Society of America (ASA) meetings. It revises the S3.22 standard as needed so that the standard reflects contemporary technology.

The latest version of the standard was published in 2003 and is therefore labeled S3.22-2003. An attempt is made to publish a revision every 5 years. Unfortunately, for several reasons, this renewal typically doesn’t happen on time. The previous standard was released in 1996. The version previous to that was published in 1987.

In fairness, much work is needed to get a new version of a standard published. Once new tests and new descriptive text are incorporated into a standard, it is sent to the S3 parent committee at ASA where it receives comments from a number of parties. These are sent back to the working group for problem resolution. When the document is finally in a form with which everyone agrees, it is published. But that’s just the start of actually enacting the standard…

FDA Approval of the New Standard

Additional time is needed before the standard is placed into general use. After a revision is published, the FDA reviews the standard and publishes a statement of approval in The Federal Register. This process can take anywhere from months to years. When the new standard is finally acknowledged, the industry is given a period of time (typically 6 months) in which to start to use the standard for publication of hearing aid data.

The older version remains in effect until the newer version receives FDA approval. The present 2003 standard is in this state. The FDA has not officially accepted this standard as the “new labeling document” and, therefore, hearing aids are still being tested according to the 1996 standard.

When will the new labeling standard be approved? No one in a position of authority has been willing to make a guess.

Industry Changes = Standard Changes?

It would be an understatement to say that hearing aid technology has seen many advances since the first S3.22 standard was published in 1977. At that time, all hearing aids used analog circuits, and a select few of these devices featured compression or automatic gain control (AGC). Digital control of analog hearing aids appeared on the scene in the 1980s (ie, programmability with digital control over analog circuits), and completely digital hearing instruments were introduced in the mid-1990s.

Many digital hearing aids produced today do not provide manual volume controls; gain is often controlled by an automatic program. Digital aids are now produced that, according to the manufacturers, “think for themselves” and make adjustments to a wide variety of parameters, including frequency response, directionality and the directional polar pattern, and provide noise reduction. As the reader is aware, every new issue of each industry publication (including this one) contains several announcements of a new operational advantage.

Keeping Pace with “Digital” and Other Changes

Has the ANSI S3.22 standard kept pace with the hearing aid industry? Some changes have been made in tests and wording, but the term “digital” does not appear in either the 1996 or the 2003 version of the standard. Although the word is absent from the new standard, the standard’s structure has been changed to accommodate some digital realities.

The first goal of the standard is to check to see if the main components are functional: microphone, receiver, and circuit module. These tests are done with the AGC functions disabled or reduced. Although the use of reference test gain (RTG) has been continued, its significance is reduced (to be discussed later). It is understood that a digital product will probably not be operated with AGC functions disabled when placed on a user’s ear. After most functions are tested, AGC is enabled and tests are done to determine if the static and dynamic AGC functions are operable.

There are other aspects of the standard that may appear to be problematic to the experienced dispensing professional. However, most of the new standard employs good reasoning for keeping or modifying the parameters of the test.

Removal of the volume control and its effect on how the ANSI test operates. The removal of the volume control (VC) may complicate the way that tests are performed at the manufacturers’ facilities and in the dispensers’ offices. The programmer device (typically a stand-alone “programming box” or a computer installed with the manufacturer’s programming software) is used for adjustment of circuit parameters, including the volume or gain control. Frequently, the programming device is also used to supply power to the hearing aid being tested and programmed. The analyzer therefore cannot measure hearing aid current drain unless the programmer is replaced with the analyzer’s battery replacement connection during those measurements that include battery drain.

Test Signals. All acoustic tests listed in the various versions of the S3.22 standard use pure-tone signals. Although pure-tone signals are not typically representative of what hearing aids are expected to amplify, this signal type is used because professional engineers agree more closely on what to expect when analysis is done. When complex signals are analyzed, the answers obtained are dependent on the method of analysis. With a pure-tone, it is more likely that all forms of analyzers will give the same answer.

Gain, MPO, and Distortion. It is useful to remember that the basic test parameters for a hearing aid are gain, maximum power output, and distortion. Other parameters are also specified in the standard, including noise level, battery drain, telecoil sensitivity, and dynamic and static AGC parameters. All of these tests are frequency dependent.

Gain tells us how much a soft sound will be amplified. Loud sounds drive the aid to its maximum power output, which must also be tailored to the individual user. Too little power will not allow the user to hear what he/she needs; too much can be uncomfortable or even dangerous to the individual’s hearing health. Distortion is important and relates to sound quality and speech understanding, especially in noisy situations.

1996 vs 2003 Standards

The 1996 version of the standard was written at a time (1994-95) when the digital hearing aid had not yet been introduced in the hearing healthcare marketplace. Digital control of analog circuits was being used, but analog operation parameters have been covered in the standard since the original version was written. The 1996 version will continue to be used until the FDA approves the 2003 version. Although a number of differences exist (Table 1), a comparison of test parameters from the two standards shows that they are very similar.

TABLE 1. The major differences between the ANSI S3.22-1996 and the ANSI S3.22-2003 tests.

Automatic gain control (AGC) tests versus reference test gain (RTG). The two tests of full-on gain and maximum output are run with the gain full on and the frequency response set to its broadest level. In the older (1996) standard, the AGC parameters were defined by the manufacturer. In the 2003 version, AGC and expansion actions are turned off, if possible. All other tests are run at a gain control setting called reference test gain (RTG). When the AGC parameters are tested, the AGC and expansion functions are adjusted for maximum effect in the 2003 version. AGC tests are then run at one or more of a set of five frequencies selected by the manufacturer.

For both standards, reference test gain adjustment requires the operator to use the hearing aid programming device if the product does not have a manual gain control. The same goes for the adjustment of the AGC parameters required in the 2003 version. If the programming device also powers the hearing aid, its use precludes the connection of an equivalent battery source from the analyzer that would allow the measurement of battery current. It is possible to disconnect the programming cable and insert the external battery source during RTG tests, but connection is again required for reprogramming the aid when AGC tests are performed.

The 1996 version reflects the older philosophy of adjusting the aid to typical user settings. In the 2003 version, the viewpoint is slightly different; information from these tests indicates whether the input and output transducers are operating properly, and whether the processing circuit is functional. The tests at RTG give an idea of the unit’s operation when it is adjusted to work on an actual person. The AGC tests demonstrate that the static and dynamic AGC parameters are working as designed.

In the 2003 version, these tests are modified from the older standards in that the AGC functions are basically disabled during all tests except AGC parameter tests. This action is done in order to stress the output transducer to maximum performance. Taking out the AGC action also reduces response curve distortions known as “blooming”² that occur in AGC hearing aids when tested with pure-tones.

Testing Requirements for 1996 and 2003 Versions: An Example

When a hearing aid is tested to a version of the ANSI standard, setup information must be obtained from the manufacturer in order to be able to duplicate specification data. With most digital products, the information will be in the form of hearing instrument program settings entered by computer or via the proprietary programmer.

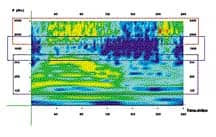

Examples of test runs on a digital aid are shown in Figure 1a-b. The aid in this example was the type VQ720 (PAC) manufactured by SeboTek. The aid consists of a sound processor worn above the pinna and a receiver fit deep within the ear canal, with the two connected by a thin wire. The receiver was mounted to a modified HA-1 coupler that was equipped with a special adapter made for this type of hearing aid.

FIGURE 1a-b. ANSI S3.22-1996 test results (top) versus ANSI S3.22-2003 test results (bottom) for a digital hearing instrument.

The hearing aid has directional features, but these were disabled for the test. The front microphone of the hearing aid was positioned at the reference point in the sound chamber for testing as shown in Figure 2.

Programming of the aid was done through a computer with the SeboTek Pro-VES program (v4.5) working through a HiPro hearing aid programmer. The hearing aid has four memories for storage of operating parameters. In the tests for the 1996 version of the standard, two of the four memories were used. Memory 3 was used as the general test parameter set; Memory 2 was used for telecoil testing. Memory 3 initially was adjusted to have the master gain set to 40 dB SPL, or full on. It was then changed to 36 dB SPL to come within 1 dB of the reference test gain target. The gain in Memory 2 was also changed to 36 dB SPL when telecoil tests were run.
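The search for the reference test gain setting described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are hypothetical, the high-frequency average (HFA) frequencies of 1000, 1600, and 2500 Hz and the "HFA-OSPL90 minus 77 dB" target are recalled from the S3.22 standard rather than taken from this article, the 1 dB tolerance simply mirrors the figure quoted in the text, and the numbers in the usage lines are made up rather than measured on the aid discussed here.

```python
# Hypothetical helpers; offset and HFA frequencies are assumptions recalled
# from the S3.22 standard and should be checked against the standard itself.
HFA_FREQS_HZ = (1000, 1600, 2500)
RTG_OFFSET_DB = 77.0  # assumed: target gain = HFA-OSPL90 minus 77 dB


def hfa(values_by_freq):
    """High-frequency average of a {frequency_hz: level_or_gain_db} mapping."""
    return sum(values_by_freq[f] for f in HFA_FREQS_HZ) / len(HFA_FREQS_HZ)


def rtg_target(ospl90_by_freq, full_on_gain_by_freq):
    """Reference test gain target in dB, never higher than full-on HFA gain."""
    target = hfa(ospl90_by_freq) - RTG_OFFSET_DB
    return min(target, hfa(full_on_gain_by_freq))


def within_tolerance(measured_hfa_gain, target, tolerance_db=1.0):
    """True when the measured HFA gain is close enough to the target."""
    return abs(measured_hfa_gain - target) <= tolerance_db


# Made-up illustrative curves, not data from the aid in this article.
ospl90 = {1000: 109.0, 1600: 111.0, 2500: 110.0}
full_on_gain = {1000: 38.0, 1600: 39.0, 2500: 37.0}
target = rtg_target(ospl90, full_on_gain)
print(target, within_tolerance(33.8, target))
```

In practice the operator lowers the programmed gain in steps, reruns the measurement, and stops when a check like `within_tolerance` passes.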

Personnel at the SeboTek factory assisted in obtaining programming information for the ANSI tests. The version 4.5 software program includes a special setup for the 1996 version of the S3.22 ANSI standard, and these parameters are different from the setup necessary for the 2003 version. Figure 3 provides the complete listing of the programming parameters for the 1996 version, as supplied by the SeboTek program.

FIGURE 3. Program parameter listing for the ANSI S3.22-1996 relative to the digital aid (supplied by manufacturer).

FIGURE 4. Program parameter listing for the ANSI S3.22-2003 relative to the digital aid (supplied by manufacturer).

In the 2003 version of the standard (Figure 4), an additional memory was put into service. Memory 1 was adjusted to the parameters needed for AGC measurements. Memories 2 and 3 were used in the same way as they were used in the 1996 version, except that the settings were altered to conform to the requirements of the 2003 standard.

The analyzer used was a FONIX 7000. This analyzer is capable of running both versions of the ANSI standard. In both tests, the aid type was set to AGC, and 2000 Hz was chosen as the test frequency for the dynamic and static AGC tests. No adjustments were made to the automatically selected delay parameters for running either set of tests.

Results and Discussion

Differences can be seen in the results of the two test standards (Figures 1a-b). These changes are basically caused by the difference in the programmed compression and expansion settings to adjust the AGC parameters. In the case of the 1996 standard, the manufacturer’s settings were used, except for reference test gain (RTG). In the case of the 2003 test, compression was disabled in Memory 3 by setting compression ratios to 1:1, and setting expansion to 0. For AGC tests, Memory 1 was set to 30 dB SPL thresholds in all bands, and the expansion threshold was increased to 58 dB SPL. The compression ratio was increased to 4:1 (it was possible to use an infinite ratio, which is what the specification would suggest, but this was not employed). Again, RTG was achieved at a master gain setting of 36 dB SPL.
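To make the AGC settings above more concrete, the following Python sketch computes the static input/output curve of an idealized single-band compressor. It is a textbook simplification, not the algorithm in the tested aid: the function name is hypothetical, expansion is ignored, and treating the 36 dB master gain setting as the linear gain below threshold is an assumption made only for illustration.

```python
def static_output_db(input_db, gain_db, threshold_db, ratio):
    """Output level (dB SPL) of an idealized single-band compressor.

    Below the compression threshold the aid is assumed linear; above it,
    each `ratio` dB of input increase yields 1 dB of output increase.
    """
    if input_db <= threshold_db:
        return input_db + gain_db
    return threshold_db + gain_db + (input_db - threshold_db) / ratio


# Settings quoted in the text: 30 dB SPL threshold, 4:1 ratio.
# The 36 dB linear gain is an illustrative assumption.
for level in (30, 50, 70, 90):
    print(level, static_output_db(level, gain_db=36, threshold_db=30, ratio=4))
```

Under these assumptions, a 60 dB rise in input (30 to 90 dB SPL) produces only a 15 dB rise in output, which shows how strongly such a combination of threshold and ratio squeezes the dynamic range.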

Expansion action showed up in the 1996 version by the decrease in the equivalent input noise measurement. In the 2003 standard, expansion is disallowed, as it is viewed as an “AGC function,” which is to be disabled during non-AGC tests.

AGC parameter measures, however, were significantly affected by the 2003 requirement to maximize the AGC effect when AGC parameters are measured. The measured AGC data is not reasonable because it is highly improbable that a compression threshold of 30 dB SPL would be combined with a compression ratio of 4:1 (or higher, if the standard were to be followed exactly). The expansion threshold of 58 dB SPL is likewise inconsistent with a compression threshold of 30 dB SPL. Battery current was not measured.

Additional Manufacturer’s Data

It should be noted that SeboTek also publishes an alternate set of gain and output numbers that are significantly higher than those published for the labeling standard. This set is based on the levels expected to result from the deep insertion of the receiver into the ear canal of a patient (ie, a reduction of the aided ear canal cavity volume). It is to be remembered that these numbers are approximations of performance in an actual ear, but may be closer to reality than what the S3.22 standard measures provide.

Other Operational Parameters

Does the standard cover all significant operating parameters of a digital aid? No. In the author’s opinion, it would be beneficial if tests covering the following three characteristics were included in the next version of the standard:

Directionality. While the exact measure of directional performance is not within the scope of the S3.22 standard, enough quality problems have been observed in digital hearing aid production to make desirable the addition of a simple test of directional function. The standard does give instructions for the mounting of a directional hearing aid in a sound environment.

Processing Delay. Digital processing has introduced another characteristic called processing or group delay.³⁻⁵ The delay is inherent in digital products: a digital processor works by processing numbers, and that takes time. Some programming methods (algorithms) require longer processing delays than others. This delay can have a significant impact on sound quality and sometimes on the usability of the device.³⁻⁵
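One common way to estimate such a delay, offered here only as a sketch and not as the method used by any particular analyzer, is to send a short broadband stimulus (such as a click) through the aid and find the time shift that best aligns the recorded output with the input. The function names below are hypothetical, and the code assumes both signals are sampled at the same rate and that the aid does not invert polarity.

```python
def estimate_delay_samples(reference, delayed, max_lag):
    """Return the lag (in samples) that maximizes the cross-correlation."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        # Only compare the part of the signals that overlaps at this lag.
        n = min(len(reference), len(delayed) - lag)
        score = sum(reference[i] * delayed[i + lag] for i in range(n))
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def samples_to_ms(lag, sample_rate_hz):
    """Convert a lag in samples to milliseconds."""
    return 1000.0 * lag / sample_rate_hz


# Synthetic click and a copy delayed by 5 samples (illustrative data only).
click = [0.0] * 32
click[2], click[3], click[4] = 1.0, 0.5, -0.3
output = [0.0] * 5 + click[:-5]
lag = estimate_delay_samples(click, output, max_lag=16)
print(lag, samples_to_ms(lag, 16000))
```

A steady pure tone is a poor stimulus for this purpose because its correlation repeats every period, so the delay would be ambiguous.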

Phase. Most hearing instruments fit today are binaural. Matching of the aids is very important, and it is usually recommended that identical types be used for each ear. Using identical types in each ear yields a better chance of matching processing delay. But, especially for custom in-the-ear (ITE) and in-the-canal (ITC) products, a phase test should also be done to ensure that the receivers of the two aids are wired in the same way. Inversion of the wiring of the receiver in one ear with respect to that in the other ear will destroy any possible stereo effect benefit.³,⁵
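A polarity check of this kind can be reduced to a very simple comparison, sketched below in Python. The function name is hypothetical, and the sketch assumes that both aids were recorded with the same stimulus and that the two recordings have already been time-aligned; it does not reproduce any analyzer's actual phase test.

```python
def same_polarity(left, right):
    """True if the zero-lag correlation of the two responses is positive.

    A negative correlation suggests that one receiver is wired with
    inverted polarity relative to the other.
    """
    correlation = sum(l * r for l, r in zip(left, right))
    return correlation > 0


# Illustrative responses: the second "right" aid is an inverted copy.
left_response = [0.0, 1.0, 0.5, -0.3, 0.0]
right_ok = [0.0, 0.9, 0.45, -0.25, 0.0]
right_inverted = [-x for x in right_ok]
print(same_polarity(left_response, right_ok))
print(same_polarity(left_response, right_inverted))
```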

Special Functions. As digital signal processing strategies progress and continue to become more complex, it will be impossible to include tests that cover all of the specialized functions that have been added to hearing aids in the past several years. It should be realized that these specialized functions need to be considered “experimental.” In the long run, it may be found that some of these functions do not provide any real benefit to the user. Others that survive and become a part of the standard hearing aid design protocol (eg, possibly directional microphones) will need to be added to the ANSI standard in the future.

At present, however, if a manufacturer includes functions not listed in the ANSI standard, the FDA requires that the manufacturer have files on hand that document the basis for the claimed functions. Automatic mode switching, open-ear feedback reduction, noise reduction, and speech recognition are among special features that fall into this category. Deep-canal insertion may also be included.

Summary and Conclusion

We presently use the 1996 version of the hearing aid standard for labeling of hearing aids. This will change when the FDA officially recognizes the 2003 standard in The Federal Register. Although the newer standard addresses some issues that the digital world has created, other additions and changes are needed.

Whether the 1996 version or the 2003 version is used to characterize the performance of a hearing aid, there is a good deal of similarity in the basic data that is obtained from the two standards. Some areas are not in good agreement, such as the performance of AGC actions. It is expected that these areas of operation will be resolved by manufacturers before or shortly after labeling is switched to the newer standard. It is also possible that the 1996 standard will still be allowed for labeling of hearing aids designed and made available to the public before the date that the 2003 standard is approved, whenever that occurs.

The present system of standard approval has a major problem: If a new facility wishes to start to test according to the presently approved labeling standard, it is not possible to legally purchase a copy of that standard. Only the 2003 version is now published.

The ANSI S3.22 standard will never be capable of defining all possible operational parameters of hearing aids. It does, however, give the dispensing professional knowledge that the physical structure of the device is functional and operating. It thus continues to be a valuable verification test.

Acknowledgement

The author thanks David Hotvet, principal engineer at SeboTek Hearing Systems, Tulsa, Okla, for his assistance in providing information about the digital hearing instrument used as the example in this article.

Correspondence can be addressed to HR or George J. Frye, Frye Electronics Inc, PO Box 23391, Tigard, OR 97281; email: [email protected].

References

1. American National Standards Institute (ANSI). ANSI S3.22-2003. Specification of Hearing Aid Characteristics. Melville, NY: Acoustical Society of America; 2003.

2. Frye GJ. High-speed real-time hearing aid analysis. Hear Jour. 1986;39(6):21-26.

3. Frye GJ. Testing digital and analog hearing instruments: Processing time delays and phase measurements. The Hearing Review. 2001;8(10):34-40.

4. Van Vliet D. “It was a dark and stormy night…” Hear Jour. 2002;55(6):72.

5. Schweitzer C. Clinical Significance of Digital Delay. Paper presented at: American Academy of Audiology 14th annual convention (Session IC139); Philadelphia, Pa; April 18-20, 2002.