September 12, 2007

A Purdue University researcher is working on a new technique to diagnose hearing loss in a way that more accurately reflects real-world situations.

"The traditional way to assess speech understanding in people with hearing loss is to put them in a quiet room and ask them to repeat words produced by one person they can’t see," said Karen Iler Kirk, a professor of speech, language and hearing sciences.

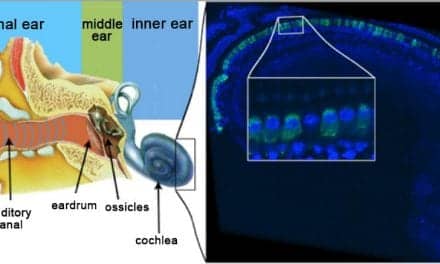

Kirk says the goal of the research is to develop new tests that reflect more natural listening situations, with visual cues and varied background noises, voice qualities, dialects and speaking rates. She explains that this approach more accurately predicts how people perceive speech in the real world and can help determine appropriate therapy and interventions, such as cochlear implants.

"The better the diagnostic tool we have to make such decisions, the better we can serve our patients," she says.

Kirk received a $2.8 million grant from the National Institute on Deafness and Other Communication Disorders for the five-year project to develop two new audiovisual and multi-talker sentence tests that expand upon the traditional spoken word recognition format that has been used since the 1950s. One test is for adults and the other for children. More than 1,000 people ages 4 to 65 will participate in the study.

"The traditional spoken word recognition format has been used to determine the need for some sensory aids, such as hearing aids, which are used to amplify sound," Kirk says. "However, it is not the best method for assessing the benefits of other sensory aids, such as the more expensive cochlear implants."

More than 100,000 people worldwide have received cochlear implants, Kirk says, adding that more health insurance companies are paying for the surgery and therapy.

This project also is expanding word lists from the traditional monosyllabic words to a greater range of words, selected by how often they are used and by their lexical density – the number of words phonetically similar to the target. For example, the word "cat" has a number of lexical neighbors such as "bat," "cap," "cut" and "scat." A word like "banana" may be used frequently but has few words that sound similar.
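As a rough illustration of lexical density, the sketch below counts a word's neighbors in a small word list. It rests on two assumptions that are not from the study: a "neighbor" is taken to be any word that differs from the target by one substituted, added or deleted sound, and ordinary spelling stands in for a phonetic transcription. The word list and function names are hypothetical.

```python
def is_neighbor(a: str, b: str) -> bool:
    """Return True if b differs from a by exactly one substitution,
    insertion or deletion (letters stand in for sounds here)."""
    if a == b or abs(len(a) - len(b)) > 1:
        return False
    if len(a) == len(b):
        # Same length: exactly one position may differ (substitution).
        return sum(x != y for x, y in zip(a, b)) == 1
    # Lengths differ by one: dropping one symbol from the longer word
    # must yield the shorter word (insertion or deletion).
    short, long_ = (a, b) if len(a) < len(b) else (b, a)
    return any(long_[:i] + long_[i + 1:] == short for i in range(len(long_)))


def lexical_neighbors(target: str, lexicon: list[str]) -> list[str]:
    """List the words in the lexicon that are neighbors of the target."""
    return sorted(word for word in lexicon if is_neighbor(target, word))


# Hypothetical toy lexicon, for illustration only.
TOY_LEXICON = ["bat", "cap", "cut", "scat", "cat", "dog", "banana", "band"]

print(lexical_neighbors("cat", TOY_LEXICON))     # dense neighborhood
print(lexical_neighbors("banana", TOY_LEXICON))  # sparse neighborhood
```

In this toy list, "cat" has four neighbors and "banana" has none, matching the contrast described above. A real analysis would compare phonemic transcriptions against a full lexicon rather than spellings against a handful of words.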

The 10 diverse speakers, who are recording more than 6,000 sentences combined, will not be producing perfectly articulated speech.

"It’s important to use sentence materials that are produced by different speakers because in the real world, we do not listen to just one person," Kirk says.

In addition to the auditory component, the materials will be presented in a visual format so listeners can see and hear the phrase.

"This is really important because hearing-impaired people often have great difficulty understanding speech if they are just listening. Seeing the face and following lip reading cues can help someone understand the intended message," she said.

Participants will be tested in auditory-only, visual-only or auditory plus visual modalities. At the end of the project, DVDs containing the test, as well as instruction booklets, data-gathering forms and a manual for data interpretation, will be available to professionals.

Another benefit from this study will be the raw data generated.

"Just collecting information from 1,000 individuals and measuring how well they perform on these tests gives us tremendous information that is not available elsewhere," Kirk said.

Kirk is a speech-language pathologist who earned her doctorate in hearing sciences. She worked with children in California schools before she joined the nation’s first pediatric cochlear implant team in 1981 at the House Ear Institute in Los Angeles. She also is collaborating with Brian French, co-director of the Purdue Psychometric Instruction/Investigation Laboratory in the College of Education. French is providing expertise in test construction and evaluation for this project.

Other members of the research team are Laurie Eisenberg, a scientist at the House Ear Institute Children’s Auditory and Research Evaluation Center; David Pisoni, Chancellor’s Professor of Psychology and director of the Speech Research Lab at Indiana University; Arthur Boothroyd, a Distinguished Visiting Hearing Scientist at the House Ear Institute; and Dr. Nancy Young, head of the section of otology and neurotology, Division of Pediatric Otolaryngology at Children’s Memorial Hospital in Chicago. Recruitment of participants will begin in 2008 at these sites.

Source: Purdue University