Exploring the fascinating links between cognition and hearing healthcare

- We Hear With Our Brains! By Douglas L. Beck, AuD (moderator/guest-editor)

- Cognitive Load and Amplification. By Brent Edwards, PhD

- Higher-Level Processing and Aided Speech-Understanding in Older Adults. By Larry E. Humes, PhD

- The Cognitive Part of Speech Recognition. By Ulrike Lemke, PhD

- Memory Systems and Hearing Aid Use. By Thomas Lunner, PhD

- Hearing Loss and Dementia. By Frank R. Lin, MD, PhD

- Fast and Easy: Can Hearing Aids Accelerate Listening and Speech Understanding? By M. Kathleen Pichora-Fuller, PhD

We Hear With Our Brains!

Although we definitely lighten the load and help patients cope with hearing loss, it’s the brain that must do the heavy lifting.

As “moderator” for the following series of short articles, it’s my distinct pleasure to introduce my distinguished colleagues and the general topic and scope of the discussions below.

As hearing professionals working with and counseling patients, our discussions traditionally revolve around “hearing.” Of course, that makes intuitive sense and seems like a reasonable and instructive starting point. However, as we offered during our two consecutive Featured Sessions1 at the 2012 AudiologyNow, there’s much more for us to consider beyond simply “hearing.”2

Hearing results from the physical, mechanical, and bio-electric transfer of acoustic energy from the outer ear to the temporal lobes. Hearing is a “bottom-up” sensory event, which all animals, mammals, reptiles (and more) share. However—and of significant importance—what separates humans from all other beings is not our hearing (which is actually quite limited as compared to dogs, cats, whales, dolphins, and many others). Our vast ability to listen—to apply meaning to complex sound structures such as language and music (“top-down” processing)—is what distinguishes us from all other beings and places us firmly atop the food chain.3

The difference between hearing and listening is analogous to the difference between sound and thought. We can accurately describe hearing with simple maps (ie, audiograms) of the detection of input signals defined acoustically in terms of relative loudness in decibels and spectral content expressed in Hertz. Unfortunately, there’s no easy map of listening or cognitive ability.

Further, given nearly identical hearing loss across multiple patients, the primary difference in their speech perception and listening comprehension abilities (in quiet and noise) is based on the overall accuracy of their auditory nervous system (ie, the ability to deliver accurate, non-distorted information to the auditory cortex) and, importantly, the individual’s cognitive ability to make sense of the delivered auditory information.4 That is, the same loudness, spectral, and spatial cues delivered to multiple patients (with essentially the same type and degree of sensorineural hearing loss measured by the audiogram) will likely be perceived differently by each patient—primarily based on their cognitive abilities.2 Importantly, recent research indicates that the people with the highest cognitive abilities are generally more efficient and more accurate in extracting meaning from supra-threshold acoustic stimuli, and this is even more apparent in challenging acoustic environments (ie, speech in noise).

Therefore, as hearing professionals tasked (essentially) with maximizing human hearing, we must consider the ultimate speech processor: the human brain. The brain influences and interacts with hearing via cognitive (and other) abilities to facilitate listening. Specifically, “Listening Is Where Hearing Meets Brain.”3-5

As we did from the podium at the 2012 AudiologyNow conference, I have asked my learned colleagues to create short written essays reflecting their key points and “summary statements” based on their 2012 AudiologyNow Featured Session presentation.

Cognitive Load and Amplification

Our speech-in-noise testing may be telling only part of the story; cognitive load appears to play a major role in patients’ success with hearing aids.

Evidence is increasing that hearing loss, by providing an altered auditory signal to the cognitive system, can result in a cascade of cognitive and psycho-social declines: increased cognitive load, increased mental fatigue, poorer memory, poorer auditory scene analysis, difficulty with attention focusing, poorer mental health, social withdrawal, and depression. Since hearing loss negatively affects cognitive ability, hearing aids may improve such ability by improving the quality of the peripheral auditory signal reaching the cognitive system. Recent experiments have looked at several potential effects of hearing aid technology on cognitive function.

Using a dual-attention task, Sarampalis et al6 demonstrated that improving the speech-to-noise ratio through directional technology or through noise reduction can reduce cognitive load. The latter is particularly interesting because cognitive load decreased without a concurrent improvement in speech understanding. Dawes et al7 found that 12 weeks of acclimatization to hearing aids by new users resulted in both a reduction in reaction time in a dual-attention task and an improvement on the SSQ effort subscale, both indicators that cognitive load was reduced over time.

The importance of reduced cognitive load is the implication that mental fatigue (from listening to speech in a difficult environment) also will be reduced. This was demonstrated by Hornsby8 who used a combined speech and vigilance task to show that hearing-impaired patients’ fatigue increased over the course of the task if they were unaided but that no fatigue increase was measured when the same subjects wore hearing aids.

To measure many of these effects of the auditory periphery on cognitive function, new experimental methods are necessary. Traditional speech-in-noise tests do not tap the complex functioning of the cognitive system in a way that is sensitive to the effects that hearing loss and hearing aids can have on cognitive ability.

To this end, we have developed two tests of speech understanding in complex environments that go beyond audibility effects. The first is a measure of auditory attention switching ability,9 and the second is a measure of semantic information received from simultaneous conversation streams arriving at the listener from different locations.10 These tests should allow for better measures of the impact of hearing loss and hearing aid technology on complex auditory processing, which stresses the cognitive system. Through these and other new experimental paradigms, we will discover benefits of hearing aid technology on cognitive ability that go beyond the measures of audibility and speech intelligibility.

Key Points

- Hearing aids can ameliorate the negative effects of hearing loss on cognitive function by improving the quality of the auditory signal reaching the auditory cortex.

- New outcome measures are being developed that engage the cognitive system more than traditional speech tests. These measures should allow for greater sensitivity in quantifying the effect of hearing aid technology on cognitive ability.

Higher-Level Processing and Aided Speech-Understanding in Older Adults

Older adults’ performance does not match younger adults’ performance—even if the hearing aid fitting is “perfect.”

Using a site-of-lesion framework, we have often classified the factors underlying speech-understanding problems of older adults as peripheral, central-auditory, or cognitive, including combinations of these.11-13 The development with advancing age of a high-frequency sensorineural hearing loss, a key peripheral factor, has been documented for many decades.14 Approximately 30% to 40% of Americans over the age of 65 years have a significant hearing loss that likely impacts their ability to understand speech.15

Research has repeatedly confirmed the primary importance of the peripheral hearing loss as a factor underlying the unaided speech-understanding difficulties of older adults.16 Secondary factors contributing to the unaided performance of older adults have often been identified and typically have been one of several cognitive factors.17 Whereas hearing loss often accounts for 40% to 70% of the variance in unaided speech-understanding performance among older adults, cognitive factors typically account for much less (10% to 15%).
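To put those percentages on a more familiar scale, variance explained can be converted to a correlation magnitude. The sketch below is illustrative only: it assumes each figure is the variance explained by a single predictor (so that |r| is the square root of the proportion), which the text does not state, and the function name is hypothetical.

```python
import math


def correlation_from_variance_explained(proportion: float) -> float:
    """Return |r| implied by a proportion of variance explained.

    Assumption (not from the article): one predictor, so r^2 = proportion.
    """
    if not 0.0 <= proportion <= 1.0:
        raise ValueError("proportion must be between 0 and 1")
    return math.sqrt(proportion)


# Ranges quoted in the text, expressed as proportions of variance.
quoted_ranges = {
    "hearing loss (unaided)": (0.40, 0.70),
    "cognitive factors (unaided)": (0.10, 0.15),
}

for label, (low, high) in quoted_ranges.items():
    r_low = correlation_from_variance_explained(low)
    r_high = correlation_from_variance_explained(high)
    print(f"{label}: |r| from {r_low:.2f} to {r_high:.2f}")
```

Under that assumption, 40% to 70% of variance corresponds to correlations of roughly 0.63 to 0.84, and 10% to 15% corresponds to roughly 0.32 to 0.39.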

When the high-frequency sensorineural hearing loss in older adults increases, either across a group of such listeners or within an individual, two effects are likely:

- The audibility of important high-frequency speech components decreases;

- The underlying cochlear pathology increases.

Whereas the former effect is fairly easily addressed via conventional amplification for the majority of older adults, amplification cannot undo or repair the underlying cochlear pathology. As a result, it is important to determine which effect underlies the strong dependence of unaided speech recognition on peripheral hearing loss. In a series of experiments using spectrally shaped speech that closely approximates well-fit hearing aids, we demonstrated that it is primarily the inaudibility effect that is responsible for the correlation between peripheral hearing loss and unaided speech understanding.18,19 Basically, when the speech signal is spectrally shaped to mimic well-fit hearing aids, the amount of high-frequency hearing loss no longer explains individual differences in speech understanding.

Importantly, however, even when the speech has been carefully spectrally shaped to ensure audibility over a wide bandwidth (out to at least 4000-5000 Hz), older adults listening under such conditions still do not perform as well as young adults under the same listening conditions. This observation is especially true for listening conditions in which speech or speech-like competition is involved.16 Under these conditions (aided listening with competing speech or speech-like stimuli), cognitive measures emerge as a primary predictor, accounting for 15% to 50% of the variance in aided speech-understanding performance.

To date, however, research has not converged on the key cognitive ability or abilities that are most relevant for aided speech understanding in older adults.17 Candidate cognitive measures identified to date include measures of working memory, processing speed, and attention/inhibition.

Key Points

- A starting point for remediating the speech-understanding problems of older adults is the fit and verification of amplification that restores the audibility of speech through at least 4000-5000 Hz.

- Once well-fit with amplification, the aided speech-understanding performance of older adults in many everyday listening situations involving competing speech will be largely determined by higher-level processes, especially cognitive function. It will be increasingly important to identify this potential limitation to hearing-aid benefit and to tailor remediation accordingly.

The Cognitive Part of Speech Recognition

Hearing aids provide the “bottom-up” information that enables cognition.

Traditional models of speech processing in the brain have focused on two speech centers in the left hemisphere: Wernicke’s and Broca’s areas. However, today’s neurophysiologic models define a more extended network involved in speech processing. Specifically, this network includes the superior temporal gyrus (STG, which includes Wernicke’s area and the primary auditory cortex), the middle temporal gyrus (MTG), and the inferior frontal gyrus (IFG, including Broca’s area; see Friederici20).

New technologies have been essential in providing evidence for long-range structural connections between multiple brain areas. Diffusion tensor imaging tractography has identified two major pathways (the dorsal and ventral streams), which connect language relevant regions of the temporal and frontal cortex.21 In the left hemisphere, connections linking STG and the frontal lobe were identified, and left and right hemisphere connections linking regions below the superior temporal sulcus with the frontal lobe were recognized.

The dorsal stream appears to link sound to action by mapping phonological representations onto articulatory motor representations. The ventral stream may link sound to meaning by mapping phonological representations onto lexical conceptual representations.20,22 A recent meta-analysis of functional magnetic resonance imaging (fMRI) results for phoneme and word recognition supports a hierarchical recognition of temporally complex speech sounds along the auditory ventral stream.23

Nonetheless, both hemispheres are needed for successful speech recognition. It has been demonstrated that the right hemisphere contributes to speech comprehension, notably in pre-attentive spectro-temporal feature processing24,25 and suprasegmental processing including prosody, intonation, and speech rhythm.26,27 Lexical-semantic processing mainly activates the left hemisphere, whereas phonemic tasks involve both hemispheres, and prosodic information processing is lateralized to the right.21

ONLINE EXTRA

See Vishakha Rawool’s three-part article on the aging auditory system (July-Sept 2007 HR)

Right frontal activation seems not specific to the language component involved28 and appears related to the recruitment of additional executive processes, such as selective attention and verbal working memory. This agrees with the fact that the frontal cortex steers our attention through motivation, expectation, and executive control. Therefore, there is evidence for bilateral acoustic-phonetic processing early on, followed by specialization of hemispheres for processing of syntactic structure, grammatical and semantic relations to the left; and for prosody, intonation and speech rhythm to the right. For final interpretation and recognition, both information streams are integrated again and mapped onto semantic knowledge in memory within a few milliseconds.

Neuroscience indicates that successful speech comprehension is a very complex process because the final interpretation of information, meaning, and intent is based on semantic knowledge and socio-emotional experience and draws on other higher-order cognitive functions across the brain.

In addition to basic hearing abilities, listening skills are required. Listening is referred to as hearing with intention and attention for purposeful activities (including processes of motivation, planning, expectation), demanding the expenditure of mental effort—including short-term and working memory for holding and manipulating information, allocation of attention, and executive control processes (see ICF Consensus Statement29).

As speech is generally presented at 140 to 180 words-per-minute in ordinary conversation, comprehension draws on the cognitive capabilities of speed-of-information processing, selective attention, and verbal working memory. Although significant differences exist between individuals, age-related declines are generally observed for these same abilities. Shared variance between sensory and cognitive age-related changes is higher in old age than in young age.30 While a two-way relationship of sensory and cognitive age-related changes is probable, it is not known to what extent central slowing causes inefficient signal processing, or to what extent inefficient processing slows the system.
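The time pressure implied by those speech rates is easy to work out. The following is a minimal sketch that converts words-per-minute into the average time available per word; the function name is hypothetical, and treating every word as equally long is a simplification made here, not a claim from the text.

```python
def average_ms_per_word(words_per_minute: float) -> float:
    """Average time per word in milliseconds at a given speech rate.

    Simplification: ignores pauses and differences in word length.
    """
    if words_per_minute <= 0:
        raise ValueError("words_per_minute must be positive")
    return 60_000.0 / words_per_minute


# Conversational rates quoted in the text.
for rate in (140, 180):
    print(f"{rate} wpm -> about {average_ms_per_word(rate):.0f} ms per word")
```

At 140 to 180 words-per-minute, a listener has on average only about 430 to 330 ms per word, which is why processing speed, attention, and working memory matter for keeping up.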

Interestingly, with aging, some cognitive abilities also improve as older people usually have more experience with a wider variety of social situations and broader semantic knowledge, and they make better use of listening strategies, context, and prosodic information. These abilities can serve as compensating resources for successful speech recognition and communication.

Naturally, having normal (or near-normal and/or corrected) hearing is a pre-condition for successful speech recognition. Obviously, the better the quality of the incoming acoustic signals to the auditory system, the better the chances for successful speech recognition. Adult-onset hearing loss impacts more than 27% of men and 24% of women ages 45 and over, and the prevalence rates increase with age.31 This is where hearing technology can be of great help. Hearing provides the sensory (ie, bottom-up) information that enables cognition. Normal (or near-normal and/or corrected) hearing is, therefore, a prerequisite for participating in engaging social interaction and communication, and it thus supports and improves cognition. These same hearing sensations facilitate orientation, support a sense of security, and free cognitive resources for other meaningful activities in daily life.

Key Points

- The better the quality of incoming signals to the central auditory system, the better the chances for successful speech recognition.

- However, speech comprehension is a complex process relying on sensory hearing abilities, as well as on higher-order cognitive functions that are organized across the brain.

- Age-related decline in speed-of-information processing, working memory capacity, or selective attention may challenge successful speech recognition. Improved abilities with age include social experience, semantic knowledge, use of listening strategies, context, and prosodic information. These can serve as compensating resources for successful speech comprehension and communication in old age.

Memory Systems and Hearing Aid Use

Testing for cognitive function will eventually become an essential part of hearing aid selection and fitting.

Cognitive Hearing Science (also referred to as Auditory Cognitive Science) is an emerging field of interdisciplinary research concerning the interactions between hearing and cognition. Cognitive Hearing Science presents new opportunities to use complex digital signal processing to design technologies to perform in challenging everyday acoustic environments. Further, Cognitive Hearing Science considers the increasing social imperative to help people whose communication problems span hearing and cognition.32 Hearing aids use complex signal processing intended to improve speech recognition. However, such processing may alter the signal in ways that impede or cancel the intended benefits for some individuals.33 Additionally, signal degradation may originate from external sources (eg, environmental noise), as well as internal sources (ie, the cochlea). Regardless of origin, degradation increases “cognitive load” and creates a subsequent need for allocating limited cognitive processing resources to the recovery of degraded information at the auditory periphery. This, in turn, reduces the resources available for the successful processing and identification of the linguistic content.

Working memory refers to the limited capacity of an individual to hold and manipulate a set of items (ie, numbers, words, colors, etc) in the mind. Complex working memory capacity can be assessed using multiple tools, such as the Reading Span Test (RST).34,35 The RST measures the simultaneous processing and memory aspects of working memory. Working memory is highly involved in communication under complex and challenging acoustic conditions, particularly for hearing-impaired people when their auditory input is degraded.

For example, when conversing in a noisy background, people need to store information in their working memory to decipher subsequent information while keeping track of and filling in missing information and while ignoring irrelevant information.36

Three studies show the importance of assessing individual working memory capacity to individualize and improve hearing aid signal processing. The first study assessed the importance of working memory in varying background noise levels (unpublished data, 2012; Bramslow L, Eneroth K, Lunner T). Two groups with low or high RST capacities were assessed using Oticon Agil hearing aids in a speech-in-noise test (Dantale2) with two competing talkers. The results showed that the cognitively high-performing group had approximately 20% better scores than the cognitively low-performing group at a number of signal-to-noise ratios. This result corroborates Lunner37 and suggests that working memory capacity is important for the ability to perform in competing-talker situations. This also may suggest individualization as to when directional microphones and noise reduction schemes should be used.33

ONLINE EXTRA

See Jerker Rönnberg’s article “Working Memory for Speechreading and Poorly Specified Linguistic Input: Applications to Sensory Aids” (May 2003 HR).

The second and third studies investigated the influence of hearing aid signal processing distortions while assessing speech in noise. Lunner and Sundewall-Thorén38 showed the benefit of a fast-acting wide dynamic range compression (WDRC) depended on the working memory capacity of the individual. Indeed, people with high working memory capacity benefited most from fast-acting compression. Those with low working memory capacity benefited most from slow-acting compression and were at a disadvantage using fast-acting compression.

Souza39 showed that elderly individuals with low working memory capacity were more susceptible to frequency compression processing. Thus, fast-acting WDRC and frequency compression can be considered to produce hearing aid signal processing distortions that negatively affect elderly persons with low working memory. Therefore, it would be beneficial to individualize hearing aid signal processing based on cognitive abilities (in particular working memory capacity), and there is a need for clinical procedures to assess cognitive functioning.

Key Points

- Hearing aid signal processing can affect cognitive function and speech comprehension in positive and negative ways. It appears beneficial to make individualized hearing aid signal processing “less aggressive” while using WDRC and frequency compression for older people (and others) with low working memory capacity. Further, the use of directional microphones and noise reduction schemes should likely be tailored to suit the cognitive abilities of the individual.

- There is a need for clinical cognitively based procedures that can determine the settings of hearing aid parameters based on cognitive abilities.

Hearing Loss and Dementia

A closer look at the “use it or lose it” theory.

An association between age-related hearing loss (ARHL) and dementia was described in a seminal retrospective case-control study published in 1989 in the Journal of the American Medical Association.40 Remarkably, over the ensuing two decades, there has been little research effort exploring the basis of this association despite dementia being one of the most important public health issues facing our society.

Current projections estimate that the prevalence of dementia will continue to double every 20 years, such that 1 in every 30 Americans will have prevalent dementia by 2050.41 At the present time, there is not one single established intervention or pharmacologic therapy that could potentially even help delay the onset of dementia.42
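A projection that doubles every 20 years is exponential growth with a fixed doubling time. The sketch below computes only the relative growth factor, because the text gives no baseline prevalence; the function name and the example intervals are illustrative assumptions.

```python
def growth_factor(years_elapsed: float, doubling_time_years: float = 20.0) -> float:
    """Multiplicative growth after `years_elapsed` for a fixed doubling time."""
    if doubling_time_years <= 0:
        raise ValueError("doubling_time_years must be positive")
    return 2.0 ** (years_elapsed / doubling_time_years)


# Illustrative intervals (not taken from the article).
for years in (20, 40):
    print(f"after {years} years: x{growth_factor(years):.1f}")
```

With a 20-year doubling time, prevalence is twice the baseline after 20 years and four times the baseline after 40 years.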

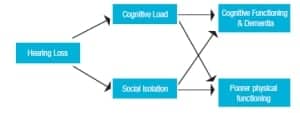

A conceptual model through which ARHL could be mechanistically associated with cognitive decline and dementia is presented in Figure 1. In this model, two non-mutually exclusive pathways of increased cognitive load43 and loss of social engagement44-46 mediate effects of ARHL on cognitive functioning.47 Epidemiologic studies performed over the last 2 years have begun to explore whether ARHL is independently associated with cognitive functioning and incident dementia consistent with this model. Using cross-sectional data from both the National Health and Nutrition Examination Surveys48 and the Baltimore Longitudinal Study of Aging (BLSA),49 we have demonstrated that greater hearing loss is associated with poorer cognitive functioning on non-verbal tests of memory and executive function among older adults. In both of these studies, a 25 dB shift in the speech-frequency puretone average was equivalent to nearly 7 years of aging on cognitive scores in older adults.
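For readers less familiar with the metric, a puretone average is simply the mean of audiometric thresholds at a set of frequencies. The sketch below assumes the speech-frequency average is taken over 500, 1000, 2000, and 4000 Hz, a common convention that the text does not spell out; the function name and both audiograms are hypothetical.

```python
from statistics import mean

# Assumption: frequencies (Hz) included in the "speech-frequency" average.
SPEECH_FREQUENCIES_HZ = (500, 1000, 2000, 4000)


def pure_tone_average(thresholds_db_hl, frequencies_hz=SPEECH_FREQUENCIES_HZ):
    """Mean threshold (dB HL) across the chosen frequencies."""
    missing = [f for f in frequencies_hz if f not in thresholds_db_hl]
    if missing:
        raise KeyError(f"missing thresholds for frequencies: {missing}")
    return mean(thresholds_db_hl[f] for f in frequencies_hz)


# Hypothetical audiograms (frequency in Hz -> threshold in dB HL).
listener_a = {500: 10, 1000: 15, 2000: 20, 4000: 35}
listener_b = {500: 30, 1000: 40, 2000: 50, 4000: 60}

shift_db = pure_tone_average(listener_b) - pure_tone_average(listener_a)
print(f"PTA shift between listeners: {shift_db} dB")
```

In this made-up example the two listeners differ by 25 dB in puretone average, the size of shift discussed above.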

Longitudinal cognitive data from the Health, Aging, and Body Composition Study demonstrated similar findings.50 Compared to those with normal hearing, individuals with hearing loss had accelerated rates of decline on non-verbal cognitive tests. Finally, analysis of longitudinal data from a cohort of older adults in the BLSA has demonstrated that, compared to those individuals with normal hearing, those with a mild, moderate, and severe hearing loss had a 2-, 3-, and 5-fold increased risk of incident dementia, respectively.47 In all these studies, hearing aid use was not significantly associated with attenuated rates of cognitive decline and risk of dementia, but data on other key variables (eg, years of hearing aid use, adequacy of hearing aid fitting and rehabilitation, etc) that would affect the success of hearing loss treatment and affect any observed association were not available. Consequently, whether hearing rehabilitative strategies could affect cognitive decline remains unknown and will likely never be determined from observational epidemiologic studies.

Figure 1. Conceptual model of hearing loss with cognitive and physical functioning in older adults.

Hearing loss is highly prevalent in older adults with nearly two-thirds of older adults 70 years and older having clinically significant hearing loss, but with less than 15% receiving any form of rehabilitative treatment.50 Epidemiologic research demonstrating that ARHL is independently associated with cognitive functioning and dementia is intriguing because the hypothesized mechanistic pathways underlying these associations (Figure 1) may be amenable to hearing rehabilitative therapies. A multi-site randomized controlled trial of hearing rehabilitative treatment currently being planned will help elucidate whether effective hearing rehabilitative treatment could mitigate cognitive decline and help delay the onset of dementia in older adults (submitted paper, Lin FR, Yaffe K, Xia J, et al: Hearing loss and cognitive decline among older adults).

Key Points

- Greater hearing loss in older adults is independently associated with poorer cognitive functioning in domains of memory and executive function, accelerated cognitive decline, and the risk of incident dementia.

- Whether hearing rehabilitative therapies could help mitigate cognitive decline and delay dementia in older adults remains unknown.

Fast and Easy: Can Hearing Aids Accelerate Listening and Speech Understanding?

For older listeners, signal quality and semantic context are critical.

As adults age, cognitive information processing slows and auditory temporal processing is disrupted, with the combined result being that listening can become “sluggish.” In general, the easier it is to process information, the faster a listener can respond to it. Ease of listening could be improved if the signal quality is improved (possibly by hearing aids) and/or if listeners are trained to use expectations and knowledge to anticipate what the incoming sound is likely to be.

Using lexical decision reaction time as a speed measure assumed to reflect the ease of listening to and understanding speech, a set of experimental findings for younger and older listeners with good audiograms illustrates how both signal clarity and priming by context could be harnessed to accelerate listening (submitted paper, Goy et al). In the experiments, the time to decide if a sentence-final target presented in quiet was a word or not was measured. The initial portion of the sentence provided congruent, neutral, or incongruent semantic context for the target word, and this context was presented either intact, low-pass filtered, time-compressed, or in competing babble. For younger adults, congruent context facilitated lexical decision by about 250 ms when there was no distortion, and facilitation was reduced as more distortion was applied regardless of the type of degradation. Older adults were always slower to respond than younger adults, but their lexical decisions were facilitated even more (300 ms) than those of younger adults when the context was congruent compared to when it was neutral or incongruent. Like the younger adults, the responses of older adults were slowed when the context was acoustically degraded, but time-compression had a more deleterious effect on their performance than on younger adults.
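Facilitation in this kind of paradigm is a difference between mean reaction times. As a minimal sketch, the code below subtracts the mean reaction time after congruent context from the mean after a baseline context; using the neutral condition as the baseline is an assumption made here, and the function name and reaction times are invented for illustration rather than taken from the study.

```python
from statistics import mean


def context_facilitation_ms(baseline_rts_ms, congruent_rts_ms):
    """Mean baseline reaction time minus mean congruent reaction time.

    Positive values mean congruent context sped up the lexical decision.
    """
    if not baseline_rts_ms or not congruent_rts_ms:
        raise ValueError("both conditions need at least one reaction time")
    return mean(baseline_rts_ms) - mean(congruent_rts_ms)


# Invented reaction times in milliseconds (not data from the experiments).
neutral_rts = [900, 950, 1000]
congruent_rts = [680, 700, 720]

print(f"facilitation: {context_facilitation_ms(neutral_rts, congruent_rts)} ms")
```

With these invented values the facilitation is 250 ms. The same subtraction could be repeated for each degradation condition to see how facilitation shrinks as the context becomes harder to hear.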

These findings demonstrate that listening could be made easier and faster by ensuring that signal quality is clear (improving bottom-up processing) and that listeners learn to use congruent semantic context and expectations to guide listening (improving top-down processing via the knowledge/expertise of older adults).

It seems that this paradigm could readily be adapted to demonstrate the advantages of signal processing by hearing aids. Although lexical decision provides a measure of speed of information processing during listening, other methods such as eye-tracking could uncover exactly how the listener processes speech as it unfolds. Additional research using eye-tracking has confirmed that, even when words are correctly identified, lexical processing is slower when words are presented in noise compared to when they are presented in quiet and that there is specific age-related slowing only in some conditions such as when the phonemes differentiating competing word candidates rely on hearing the initial consonant.51 Overall, measures of speed of processing promise to provide a useful new tool for measuring listening performance and the benefit from signal processing options and/or training in the use of context.

Key Points

- New measures based on speed of processing could potentially be used in the clinic to evaluate how ease of listening changes depending on signal-based (bottom up) factors or knowledge-based (top-down) factors.

- Measures of speed are more sensitive and can provide more specific insights into “online” processing during listening than “off-line” measures of speech recognition accuracy because there are multiple ways that listeners could achieve accurate word recognition.

Final Thoughts by Dr Beck

As a profession and an industry, we’re really only beginning to understand the interaction and co-dependence of cognition and audition. It seems obvious we’d all like to know how to best apply these (and many other) ideas and concepts regarding hearing, listening, and cognition to our daily clinical interactions with patients—but we’re not there yet.

It is our obligation to proceed cautiously as we continue to unravel and better understand the science that underpins the pivotal and important connections between hearing, listening, cognition, and their clinical applicability.

References

- Beck D, Pichora-Fuller K, Edwards B, Lemke U, Humes L, Lunner T, Lin F. Issues in cognition, audition, and amplification. Presented at: AudiologyNOW! 2012; March 30, 2012; Boston.

- Beck DL, Clark JL. Audition matters more as cognition declines & cognition matters more as audition declines. Audiol Today. March/April 2009.

- Beck DL, Flexer C. Listening is where hearing meets brain in children and adults. Hearing Review. 2011;18(2):30-35. Available at: www.hearingreview.com/issues/articles/2011-02_02.asp

- Beck DL, Flexer C. Hearing is the foundation of listening and listening is the foundation of learning. In: Smaldino J, Flexer C, eds. The Handbook of Acoustic Accessibility. New York City: Thieme Publishers; 2012:9-17.

- Beck DL. Exploring the maze of the cognition-audition connection. Hear Jour. 2011;64(10):21-24.

- Sarampalis A, Kalluri S, Edwards B, Hafter E. Objective measures of listening effort: effects of background noise and noise reduction. J Speech Lang Hear Res. 2009;52:1230-1240.

- Dawes P, Munro K, Kalluri S, Edwards B. Listening effort and acclimatization to hearing aids. Paper presented at: International Conference on Cognition and Hearing; June 20-23, 2011; Linkoping, Sweden.

- Hornsby B. Effect of hearing aid use on mental fatigue. Presented at: American Auditory Society Meeting; March 3-5, 2011; Scottsdale, Ariz.

- Woods W, Kalluri S. Cognitive and energetic factors in complex-scenario listening. Presented at: International Conference on Cognition and Hearing; June 20-23, 2011; Linkoping, Sweden.

- Hafter E, Xia J, Kalluri S. Visual shadowing of information in ongoing speech; a new look at the cocktail party. Presented at: 35th Association for Research in Otolaryngology Mid-Winter Meeting; February 25-29, 2012; San Diego.

- Committee on Hearing, Bioacoustics, and Biomechanics (CHABA). Speech understanding and aging. J Acoust Soc Am. 1988;83:859-895.

- Humes LE. Speech understanding in the elderly. J Am Acad Audiol. 1996;7:161-167.

- Humes LE, Dubno JR, Gordon-Salant S, Lister JJ, Cacace AT, Cruickshanks KJ, Gates GA, Wilson RH, Wingfield A. Report from the Academy Task Force on Central Presbycusis. J Am Acad Audiol. In press.

- Schacht J, Hawkins JE Jr. Sketches of Otohistory. Part 9: Presby(a)cusis. Audiol Neurotol. 2005;10:243-247.

- Cruickshanks KJ. Epidemiology of age-related hearing impairment. In: Gordon-Salant S, Frisina RD, Popper AN, Fay RR, eds. The Aging Auditory System. New York City: Springer; 2010:259-274.

- Humes LE, Dubno JR. Factors affecting speech understanding in older adults. In: Gordon-Salant S, Frisina RD, Popper AN, Fay RR, eds. The Aging Auditory System. New York City: Springer; 2010:211-258.

- Akeroyd M. Are individual differences in speech reception related to individual differences in cognitive ability? A survey of twenty experimental studies with normal and hearing-impaired adults. Int J Audiol. 2008;47(Suppl 2):S53-71.

- Humes LE. The contributions of audibility and cognitive factors to the benefit provided by amplified speech to older adults. J Am Acad Audiol. 2007;18:590-603.

- Humes LE, Kewley-Port D, Fogerty D, Kinney D. Measures of hearing threshold and temporal processing across the adult lifespan. Hearing Res. 2010;264:30-40.

- Friederici AD. The brain basis of language processing: from structure to function. Physiol Rev. 2011;91:1357-92.

- Glasser MF, Rilling JK. DTI tractography of the human brain’s language pathways. Cerebral Cortex. 2008;18:2471-82.

- Hickok G, Poeppel D. The cortical organization of speech processing. Nat Rev Neurosci. 2007;8:393-402.

- DeWitt I, Rauschecker JP. Phoneme and word recognition in the auditory ventral stream. Proc Nat Acad Sci USA. 2012;109(8):E505-14.

- Poeppel D. The analysis of speech in different temporal integration windows: cerebral lateralization as asymmetric sampling in time. Speech Commun. 2003;41:245-55.

- Zaehle T, Jancke L, Herrmann CS, Meyer M. Pre-attentive spectro-temporal feature processing in the human auditory system. Brain Topography. 2009;22(2):97-108.

- Meyer M, Steinhauer K, Alter K, Friederici AD, von Cramon DY. Brain activity varies with modulation of dynamic pitch variance in sentence melody. Brain Lang. 2004;89:277-289.

- Geiser E, Zaehle T, Jancke L, Meyer M. The neural correlate of speech rhythm as evidenced by metrical speech processing. J Cognitive Neurosci. 2008;20:541-552.

- Vigneau M, Beaucousin V, Hervé PY, et al. What is right-hemisphere contribution to phonological, lexico-semantic, and sentence processing? Insights from a meta-analysis. Neuroimage. 2011;54(1):577-93.

- World Health Organization (WHO). International classification of functioning, disability and health, ICF. Geneva: WHO; 2001.

- Baltes PB, Lindenberger U. Emergence of a powerful connection between sensory and cognitive functions across the adult life span: a new window to the study of cognitive aging? Psychol Aging. 1997;12(1):12-21.

- Lopez AD, Mathers CD, Ezzati M, Jamison DT, Murray CJL. Global Burden of Disease and Risk Factors. Washington, DC: World Bank; 2006.

- Arlinger S, Lunner T, Lyxell B, Pichora-Fuller MK. The emergence of cognitive hearing science. Scand J Psychol. 2009;50:371-384.

- Lunner T, Rudner M, Rönnberg J. Cognition and hearing aids. Scand J Psychol. 2009;50:395-403.

- Daneman M, Carpenter P. Individual differences in working memory and reading. J Verbal Learning Verbal Behav. 1980;19:450-466.

- Rönnberg J, Arlinger S, Lyxell B, Kinnefors C. Visual evoked potentials: relation to adult speechreading and cognitive function. J Speech Hear Res. 1989;32:725-735.

- Pichora-Fuller MK. Audition and cognition: what audiologists need to know about listening. In: Palmer C, Seewald R, eds. Hearing Care for Adults. Stäfa, Switzerland: Phonak; 2007:71-85.

- Lunner T. Cognitive function in relation to hearing aid use. Int J Audiol. 2003;42:S49-S58.

- Lunner T, Sundewall-Thorén E. Interactions between cognition, compression, and listening conditions: effects on speech-in-noise performance in a two-channel hearing aid. J Am Acad Audiol. 2007;18:604-617.

- Souza PE. Incorporating cognitive test into hearing aids decisions. Handout presented from course, April 11, 2012. Available at: www.audiologyonline.com/ceus//recordedcoursedetails.asp?class_id=20309

- Uhlmann RF, Larson EB, Rees TS, Koepsell TD, Duckert LG. Relationship of hearing impairment to dementia and cognitive dysfunction in older adults. JAMA. 1989;261(13):1916-1919.

- Alzheimer’s disease facts and figures. Alzheimers Dement. 2010;6(2):158-194.

- Daviglus ML, Bell CC, Berrettini W, et al. National Institutes of Health State-of-the-Science Conference statement: Preventing Alzheimer disease and cognitive decline. Ann Intern Med. 2010;153(3):176-218.

- Wingfield A, Tun PA, McCoy SL. Hearing loss in older adulthood—what it is and how it interacts with cognitive performance. Current Directions in Psychological Science. 2005;14(3):144-148.

- Fratiglioni L, Wang HX, Ericsson K, Maytan M, Winblad B. Influence of social network on occurrence of dementia: a community-based longitudinal study. Lancet. 2000;355(9212):1315-1319.

- Barnes LL, Mendes de Leon CF, Wilson RS, Bienias JL, Evans DA. Social resources and cognitive decline in a population of older African Americans and whites. Neurology. 2004;63(12):2322-2326.

- Bennett DA, Schneider JA, Tang Y, Arnold SE, Wilson RS. The effect of social networks on the relation between Alzheimer’s disease pathology and level of cognitive function in old people: a longitudinal cohort study. Lancet Neurol. 2006;5(5):406-412.

- Lin FR, Metter EJ, O’Brien RJ, Resnick SM, Zonderman AB, Ferrucci L. Hearing loss and incident dementia. Arch Neurol. 2011;68(2):214-220.

- Lin FR. Hearing loss and cognition among older adults in the United States. J Gerontol A Biol Sci Med Sci. 2011;66(10):1131-1136.

- Lin FR, Ferrucci L, Metter EJ, An Y, Zonderman AB, Resnick SM. Hearing loss and cognition in the Baltimore Longitudinal Study of Aging. Neuropsychology. 2011;25(6):763-770.

- Chien W, Lin FR. Prevalence of hearing aid use among older adults in the United States. Arch Intern Med. 2012;172(3):292-293.

- Ben-David BM, Chambers C, Daneman M, Pichora-Fuller MK, Reingold E, Schneider BA. Effects of aging and noise on real-time spoken word recognition: evidence from eye movements. J Speech Lang Hear Res. 2011;54:243-262. Published online August 5, 2010: doi:10.1044/1092-4388(2010/09-0233)

Citation for this article:

Beck DL, Edwards B, Humes LE, Lemke U, Lunner T, Lin FR, Pichora-Fuller MK. Expert Roundtable: Issues in Audition, Cognition, and Amplification. Hearing Review. 2012;19(09):16-26.