Japanese people are less influenced by lip movements, or lipreading, when listening to another speaker, according to recent evidence from a neuroimaging study at Kumamoto University in Japan. Study findings were published in the August 11, 2016 edition of the Nature.com journal Scientific Reports.

Which parts of a person’s face do you look at when you listen to them speak? Lip movements affect the perception of voice information from the ears when listening to someone speak, but native Japanese speakers are mostly unaffected by that part of the face. Recent research from Japan reveals a clear difference in the brain network activation between two groups of people, native English speakers and native Japanese speakers, during face-to-face vocal communication.

“Native English speakers attempt to narrow down candidates for incoming sounds by using information from the lips, which start moving a few hundred milliseconds before vocalizations begin,” said Kaoru Sekiyama, PhD, who was the lead investigator of the study at Kumamoto University. “Native Japanese speakers, on the other hand, place their emphasis only on hearing, and visual information seems to require extra processing.”

It is known that visual speech information, such as lip movement, affects the perception of voice information from the ears when we listen to someone face-to-face. For example, lip movement can help us to hear better in noisy conditions. However, dubbed movie dialogue, where the lip movement of the actors conflicts with the speaker’s voice, gives a listener the illusion of hearing another sound. This illusion is called the “McGurk effect.”

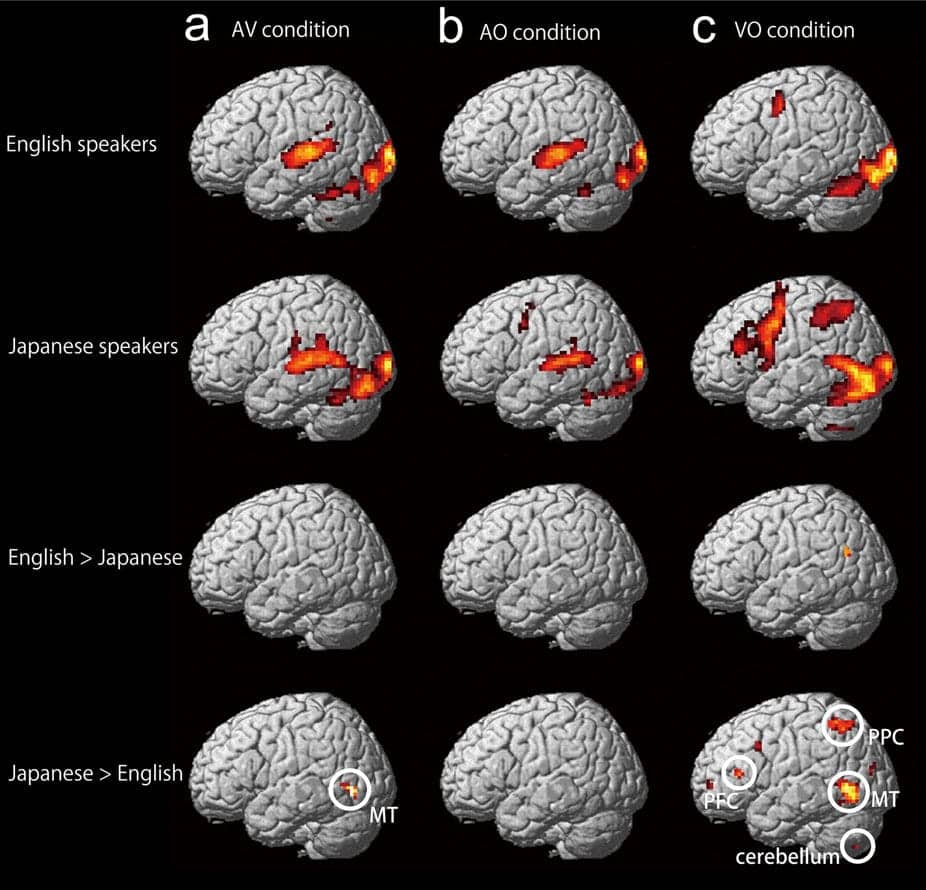

Image shows brain areas activated in native English speakers and native Japanese speakers, and those showing greater activation in native English speakers than Japanese speakers, and vice versa. (Photo credit: Kaoru Sekiyama, PhD)

According to an analysis of previous behavioral studies, native Japanese speakers are not influenced by visual lip movements as much as native English speakers. To examine this phenomenon further, researchers from Kumamoto University measured and compared gaze patterns, brain waves, and reaction times for speech identification in two groups: 20 native Japanese speakers and 20 native English speakers.

The difference was clear. When natural speech is paired with lip movement, native English speakers focus their gaze on a speaker’s lips, even before the emergence of any sound. In contrast, the gaze of native Japanese speakers is not as fixed. The researchers also found that native English speakers were able to understand speech faster by combining the audio and visual cues, whereas native Japanese speakers showed delayed speech understanding when lip motion was in view.

After conducting their initial analysis of previous studies, Kumamoto University researchers teamed up with researchers from Sapporo Medical University and Japan’s Advanced Telecommunications Research Institute International (ATR) to measure and analyze brain activation patterns using functional magnetic resonance imaging (fMRI). Their goal was to elucidate differences in brain activity between speakers of the two languages.

They found that functional connectivity in the brain between the area that deals with hearing and the area that deals with visual motion information (the primary auditory and middle temporal areas, respectively) was stronger in native English speakers than in native Japanese speakers. This finding strongly suggests that auditory and visual information are associated with each other at an early stage of information processing in an English speaker’s brain, but that the association is made at a later stage in a Japanese speaker’s brain. Both the functional connectivity between auditory and visual information and the manner in which the two types of information are processed together were shown to differ clearly between speakers of the two languages.

“It has been said that video materials produce better results when studying a foreign language. However, it has also been reported that video materials do not have a very positive effect for native Japanese speakers,” said Professor Sekiyama. “It may be that there are unique ways in which Japanese people process audio information, as related to what we have shown in our recent research, that are behind this phenomenon.”

Source: Kumamoto University; Scientific Reports, Nature.com

Image credits: Kaoru Sekiyama, PhD; © Dgilder | Dreamstime.com

It is quite obvious that Japanese speakers do not move their lips and articulate the way English-speaking people do. Hence, they would naturally not rely on lipreading to compensate for hearing loss.