By Michael Miller, Public Information Officer, University of Cincinnati

Echoes received by bats are so simple that a sound file of their calls can be compressed by 90% without losing much information, according to a study published in the journal PLOS Computational Biology. An article describing the research appears on the University of Cincinnati (UC) website.

The study demonstrates how bats have evolved to rely on redundancy in their navigational “language” to help them stay oriented in their complex three-dimensional world.

“If you can make decisions with little information, everything becomes simpler. That’s nice because you don’t need a lot of complex neural machinery to process and store that information,” study co-author Dieter Vanderelst said.

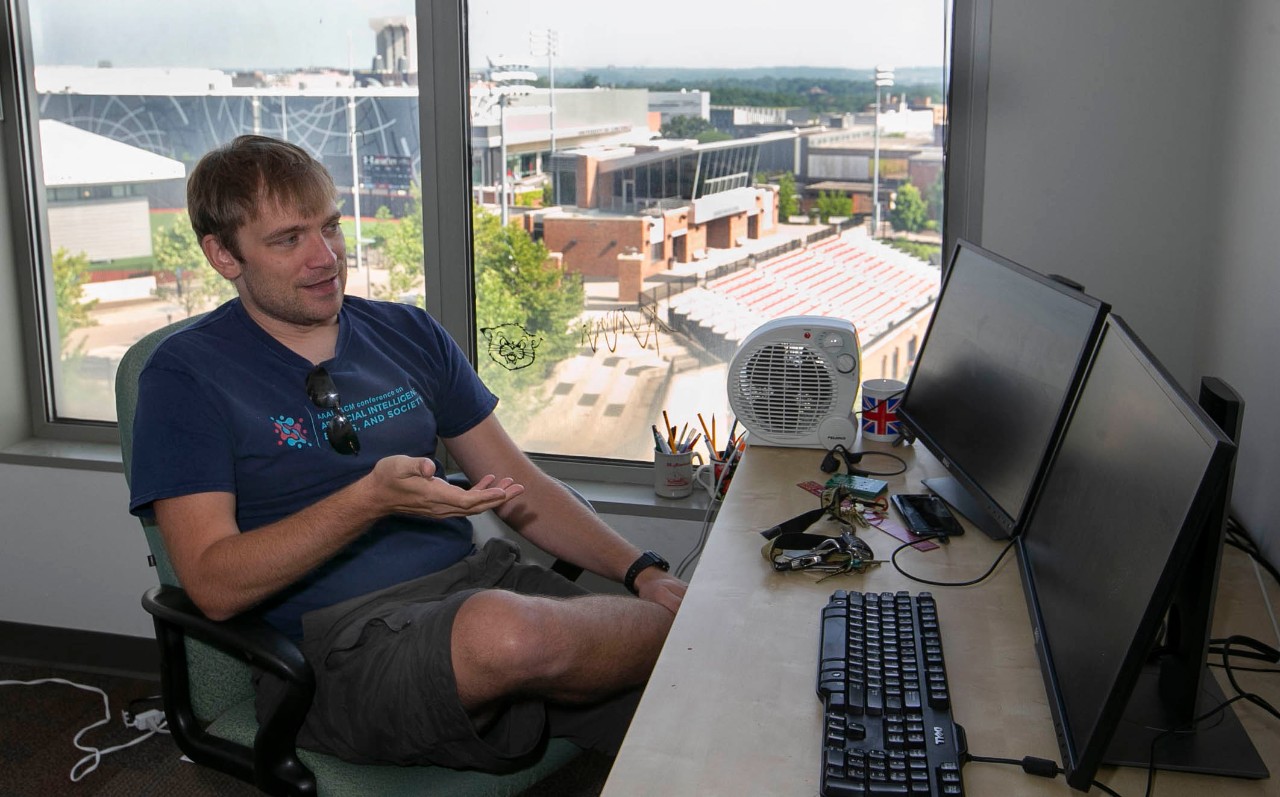

UC assistant professor Dieter Vanderelst draws from the natural world to inspire better engineering. He teaches a Biology Meets Engineering program at UC. Photo/Joseph Fuqua II/UC Creative + Brand

UC researchers suspected that the calls of bats contain redundant information and that bats might use efficient encoding strategies to extract the most relevant information from their echoes. Many natural stimuli encountered by animals have a lot of redundancy. Efficient neural encoding retains essential information while reducing this redundancy.

To test their hypothesis, they built their own “bat on a stick,” a tripod-mounted device that emits a pulse of sound sweeping from 30 to 70 kilohertz, a frequency range used by many bats. By comparison, human speech typically ranges from 125 to 300 hertz (or 0.125 to 0.3 kHz).
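For readers who want to hear what such a pulse looks like in numbers, here is a minimal sketch of a linear frequency sweep from 30 to 70 kHz. The pulse duration (2 milliseconds), the sample rate (250 kHz) and the function name `make_sweep` are illustrative assumptions, not details of the researchers' device. The sample rate was chosen only so that it is more than twice the highest frequency in the sweep.

```python
import math


def make_sweep(f_start_hz=30_000.0, f_end_hz=70_000.0,
               duration_s=0.002, sample_rate_hz=250_000):
    """Return samples of a linear sine sweep (hypothetical parameters)."""
    n_samples = round(duration_s * sample_rate_hz)
    # Rate of frequency change in hertz per second.
    sweep_rate = (f_end_hz - f_start_hz) / duration_s
    samples = []
    for i in range(n_samples):
        t = i / sample_rate_hz
        # Phase is the integral of the instantaneous frequency
        # f(t) = f_start + sweep_rate * t.
        phase = 2 * math.pi * (f_start_hz * t + 0.5 * sweep_rate * t * t)
        samples.append(math.sin(phase))
    return samples


pulse = make_sweep()
print(len(pulse), "samples in one pulse")
```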

More than 1,000 echoes were captured in distinct indoor and outdoor environments, including a barn, rooms of different sizes, bushes and tree branches, and a garden.

Researchers converted the recorded echoes to a graph of the sound, called a cochleogram. Then they subjected these graphs to 25 filters — essentially compressing the data. They trained a neural network, a computer system modeled on the human brain, to determine if the filtered graphs still contained enough information to complete a number of sonar-based tasks known to be performed by bats.

They found that the neural network correctly identified the location of the echoes even when the cochleogram was stripped of as much as 90% of its data.
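The general idea that redundant data survives heavy compression can be sketched in a few lines. The example below is not the study's method: it uses synthetic data and a standard truncated singular value decomposition as a stand-in, and every name and number in it (`compress_and_restore`, 200 fake "echoes" with 50 values each, 5 hidden sources) is an assumption made for illustration. It keeps only 10% of the dimensions and then measures how much of the original is lost.

```python
import numpy as np

# Synthetic, deliberately redundant data: 50 measured values per "echo"
# that are really driven by only 5 hidden sources, plus a little noise.
rng = np.random.default_rng(0)
n_echoes, n_features, n_sources = 200, 50, 5
mixing = rng.normal(size=(n_sources, n_features))
latent = rng.normal(size=(n_echoes, n_sources))
data = latent @ mixing + 0.05 * rng.normal(size=(n_echoes, n_features))


def compress_and_restore(x, keep_fraction=0.1):
    """Project x onto its top singular directions, then reconstruct it."""
    mean = x.mean(axis=0)
    centered = x - mean
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    k = max(1, int(round(keep_fraction * x.shape[1])))
    basis = vt[:k]                  # the k most informative directions
    codes = centered @ basis.T      # compressed representation
    restored = codes @ basis + mean
    return codes, restored


codes, restored = compress_and_restore(data)
relative_error = np.linalg.norm(data - restored) / np.linalg.norm(data)
print(codes.shape, "compressed shape; relative error:", relative_error)
```

Because the synthetic data was built from only a few sources, discarding 90% of the dimensions loses very little. Real echoes are messier, so this shows the principle rather than the study's result.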

“What that tells us is you can compress that data and still do what you need to do. It also means if you’re a bat, you can do this efficiently,” said Vanderelst, an assistant professor in UC’s College of Arts and Sciences and in the College of Engineering and Applied Science.

Grace Smith-Vidaurre, a UC postdoctoral researcher and evolutionary biologist, has studied the behavior of bats. While she was not part of Vanderelst’s study, she said the research team’s findings suggest greater complexity might not always be a better solution.

“Complexity that yields redundancy can be used to create simpler representations of sounds, which may be key for efficient cognitive processing,” Smith-Vidaurre said. “Encoding more or less complexity in vocalizations can represent a tricky tradeoff for animals like bats, which run the risk of losing information if simpler vocalizations are lost against background noise, but also if vocalizations are too complex for an individual listening to process quickly.”

Smith-Vidaurre said researchers might expect to see more or less redundancy in the calls of bats that inhabit different social or physical environments. Some species live in groups of just a few individuals while others live in colonies a million strong.

“As humans, it’s not very straightforward to look at a sound produced by a bat and determine whether the structural complexity we can see is redundant or unique information,” Smith-Vidaurre said. “When we study vocalizations or echoes produced by animals, it’s really important to place the complexity of these sounds in context by asking what the individual listening would actually perceive, as the authors have done here.”

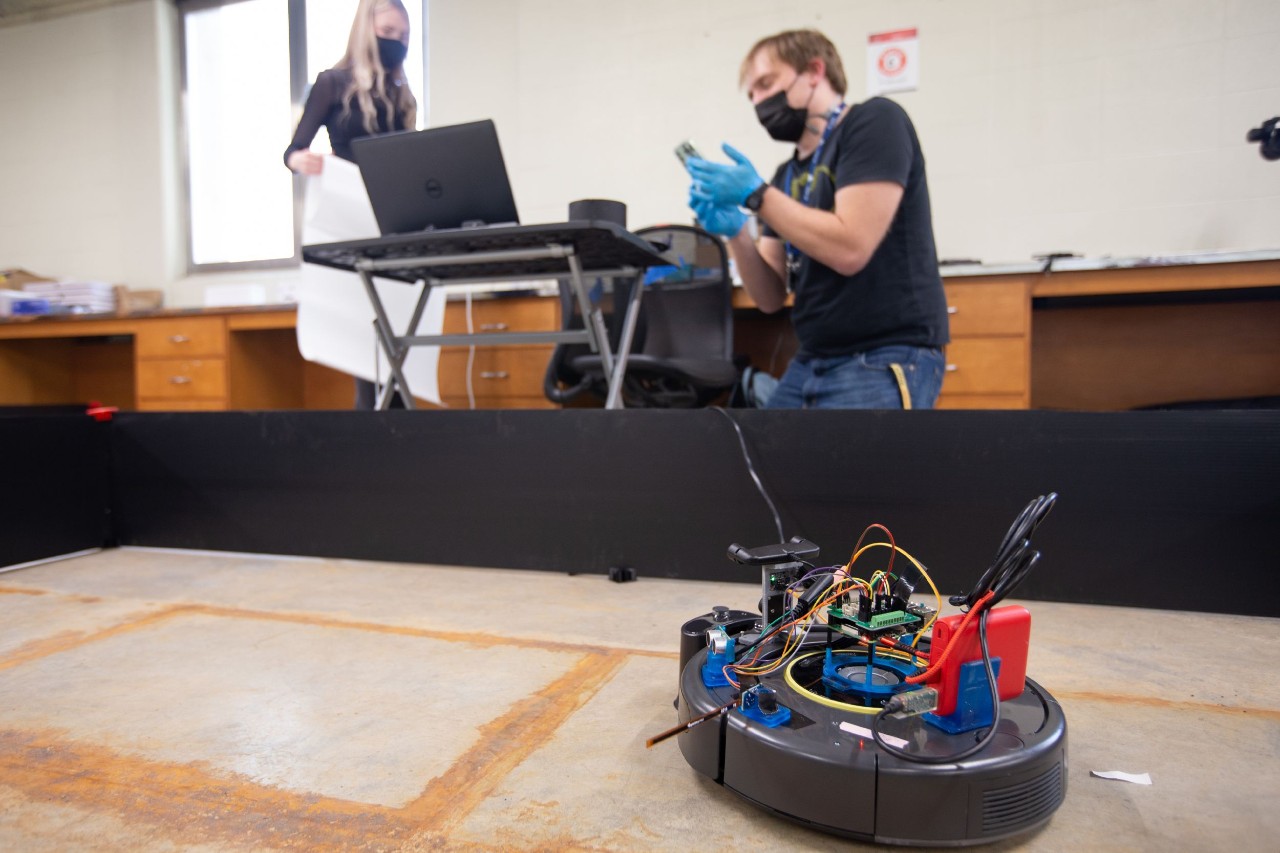

UC assistant professor Dieter Vanderelst teaches a Biology Meets Engineering course at UC that takes cues from nature to improve the autonomous navigation of robots. Photo/Andrew Higley/UC Creative + Brand

Vanderelst said researchers often can infer what bats are doing just by listening to their calls.

“Even if you don’t see the bat, you can tell with a high degree of certainty what a bat is doing,” he said. “If it calls more frequently, it’s looking for something. If the calls are spread out, it’s cruising or studying something far away.”

Bats produce their ultrasonic calls with a larynx much like ours. But what a voice box. Its muscles can contract 200 times a second, making them the fastest known muscles in any mammal.

The nighttime forest can be deafening to people because of its chorus of frogs and drone of insects. But Vanderelst said the ultrasonic frequency by comparison is pretty quiet, allowing bats to hear their own chittering calls that bounce off tree branches and other obstacles during echolocation.

While bats use different chirps for navigating than for communicating with each other, Vanderelst said they’re all pretty simple. Human language has lots of built-in redundancy as well, he said.

Fr xmpl, cn y rd ths sntnc wth mssng vwls?

“Take out a lot of letters in a sentence and it’s still readable,” Vanderelst said.
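The vowel trick is easy to reproduce. The short sketch below strips the letters a, e, i, o and u from a sentence and reports how much of the text was removed. The full sentence used here is a reconstruction assumed for illustration, and `strip_vowels` is a hypothetical helper name.

```python
def strip_vowels(text):
    """Remove the letters a, e, i, o, u (either case) from text."""
    return "".join(ch for ch in text if ch.lower() not in "aeiou")


# Assumed original sentence, reconstructed for this example.
original = "For example, can you read this sentence with missing vowels?"
stripped = strip_vowels(original)
removed_fraction = 1 - len(stripped) / len(original)

print(stripped)
print(f"{removed_fraction:.0%} of the characters were removed")
```

Under that assumption, nearly a third of the characters disappear and the sentence can still be read.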

UC graduate Adarsh Chitradurga Achutha, Vanderelst’s student, was the study’s lead author. Co-authors include Vanderelst’s mentor Herbert Peremans at the University of Antwerp, Belgium, and bat expert Uwe Firzlaff with the University of Munich, Germany.

The way bats perceive the world is fascinating from both biological and engineering perspectives, Vanderelst said.

“It’s like a riddle, looking at something that shouldn’t be able to do what it does. So the question is how?” he said. “It’s given me an appreciation for the elegant efficiency underlying this system.”

Original Paper: Achutha AC, Peremans H, Firzlaff U, Vanderelst D. Efficient encoding of spectrotemporal information for bat echolocation. PLOS Computational Biology. 2021;17(6): e1009052.

Source: UC, PLOS Computational Biology

Images: Andrew Higley, UC Creative + Brand, Joseph Fuqua II