Ten small studies about music and hearing aids that can fill important gaps in our knowledge and be done for fun and profit.

By Marshall Chasin, AuD

While our knowledge base about fitting hearing aids with music as an input has grown significantly in recent years, there are many areas where small capstone-caliber studies can make a real contribution to our clinical knowledge. Some of these studies fill in important gaps in our knowledge base, and others verify “assumptions” that we make that may or may not be valid. Many of these are addressed in my book Music and Hearing Aids.

Study 1: Commonly asked clinical questions. What is the best musical instrument for my hard-of-hearing child? Are harmonic-rich musical instruments better than ones with fewer auditory cues?

Intuitively, one would suspect that musical instruments that have a better bass response may be better for a child to learn than those with a greater high-frequency emphasis. But also, intuitively, a musical instrument that has twice the number of harmonics (possibly twice the number of auditory cues?) in a given bandwidth may be better than one with a sparser harmonic structure.

Since half-wavelength resonator musical instruments (such as the saxophone and stringed instruments) have twice as many harmonics in any given frequency range as do quarter-wavelength resonator instruments (such as the clarinet or trumpet), a saxophone may be better than a clarinet for hard-of-hearing children, who may have better cochlear reserve in the lower frequency region, provided that a more tightly packed harmonic structure does give a better sound for a hard-of-hearing person. Do twice as many harmonics mean twice as many auditory cues? Or would larger or longer bass musical instruments be better, regardless of how tightly packed the harmonic structure is, because there is more bass energy? Or perhaps both factors contribute to determining the best musical instrument for the hard-of-hearing child?
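The "twice as many harmonics" comparison can be made concrete with a minimal sketch. It assumes the idealized textbook model in which a half-wavelength resonator produces every integer multiple of the fundamental and a quarter-wavelength resonator produces only the odd multiples; real instruments deviate from this. The 200 Hz note and the 0 to 2000 Hz band are illustrative values, not taken from any study.

```python
def harmonics_in_band(f0_hz, low_hz, high_hz, odd_only=False):
    """Return the harmonics of f0_hz that fall within [low_hz, high_hz].

    odd_only=True models an idealized quarter-wavelength resonator
    (odd multiples only); odd_only=False models an idealized
    half-wavelength resonator (all integer multiples).
    """
    harmonics = []
    n = 1
    while n * f0_hz <= high_hz:
        if n * f0_hz >= low_hz and (not odd_only or n % 2 == 1):
            harmonics.append(n * f0_hz)
        n += 1
    return harmonics


# Illustrative values only: a 200 Hz note and a 0-2000 Hz band.
half_wave = harmonics_in_band(200, 0, 2000)
quarter_wave = harmonics_in_band(200, 0, 2000, odd_only=True)
print(len(half_wave), len(quarter_wave))  # prints "10 5"
```

Whether those extra harmonics translate into extra usable auditory cues is exactly what the proposed study would have to establish; the count alone does not answer it.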

Study 2: Is multi-channel compression a good idea for a music program?

While multi-channel compression has been quite successful for speech as an input to a hearing aid, it is not clear whether this holds true for music. Multi-channel compression inherently treats the lower frequency fundamental energy differently from higher frequency harmonic information, thereby altering the amplitude balance between the fundamental and its harmonics, so that a flute may begin to sound more like a violin or an oboe. Of course, although the exact frequencies of the harmonics and their associated amplitudes are quite important in the identification of a musical instrument, other dynamic factors, such as attack and decay parameters of the note, as well as vibrato, are also important factors for identification. Determining how poorly configured multi-channel compression needs to be before musical instrument identification suffers, or before the change in timbre becomes noticeable or problematic, would provide important clinical (and hearing aid design) knowledge.
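A rough sketch of how this balance shift arises, using a static (steady-state) two-channel compressor. Every number here is a hypothetical chosen for easy arithmetic: the 1000 Hz crossover, the 50 dB knee, the 2:1 and 1:1 ratios, and the input levels. Attack and release behavior is ignored.

```python
def compress_level(input_db, knee_db, ratio):
    """Static compression: above the knee, output rises 1/ratio dB per input dB."""
    if input_db <= knee_db:
        return input_db
    return knee_db + (input_db - knee_db) / ratio


def two_channel_output(freq_hz, level_db, crossover_hz=1000.0,
                       knee_db=50.0, low_ratio=2.0, high_ratio=1.0):
    """Route a component to the low or high channel and compress its level.

    All default settings are hypothetical, not a recommended fitting.
    """
    ratio = low_ratio if freq_hz < crossover_hz else high_ratio
    return compress_level(level_db, knee_db, ratio)


# Hypothetical note: fundamental at 250 Hz (80 dB), a harmonic at 2000 Hz (70 dB).
fundamental_out = two_channel_output(250.0, 80.0)   # compressed 2:1 -> 65.0
harmonic_out = two_channel_output(2000.0, 70.0)     # linear channel -> 70.0

balance_in = 80.0 - 70.0                      # fundamental 10 dB above the harmonic
balance_out = fundamental_out - harmonic_out  # fundamental now 5 dB below it
print(balance_in, balance_out)
```

With these settings the fundamental goes from 10 dB above the harmonic to 5 dB below it, which is the kind of change in spectral shape that could alter timbre.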

Study 3: Is multi-channel compression less of an issue for some instrument groups than others?

Indeed, multi-channel compression may be more problematic for some instrument groups than others. For example, when a violin player reports a “great” sound, they are referring to the amplitudes of certain higher frequency harmonics, and this helps to differentiate a beginner student model of violin from a Stradivarius. In contrast, a woodwind player, although generating a wideband response similar to that of a violinist, relies more on the lower frequency inter-resonant “breathiness” for a proper tone. An interesting area of study would be to examine whether this potential problem is greater for string players, for whom harmonic amplitude changes may be more noticeable, than for woodwind players. A hypothesis is that woodwind players would tolerate poorly configured multi-channel compression systems better than string players.

Study 4: Can we derive a Music Preference Index like the SII we have for speech?

The Speech Intelligibility Index (SII), especially the aided SII, provides information regarding frequency-specific sensation levels for speech, but due to the variable nature of music, the development of a music analog of the SII (a Music Preference Index) may not be so straightforward. For example, when listening to strings, where the lower and higher frequency components increase equally as the music is played louder, one fitting strategy may indeed point you in the correct direction. However, for more varied instrumental (and perhaps mixed vocal) music, such as orchestral or operatic music, a different approach may be required. One could conceivably work out a fitting formula (such as a “weighted average” or dot product) of adequate sensation levels across the frequency range for orchestral music based on the various energy contributions of each of the musical sections, or perhaps another index value for folk. Such an aided Music Preference Index, or series of indices, may provide a goal (or goals) for a fitting target.
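One way the “weighted average” or dot-product idea might look is sketched below. This is purely hypothetical: the function name, the four bands, the weights, and the 30 dB "full credit" range are all assumptions made for illustration, and none of them comes from a published index.

```python
def music_preference_index(sensation_levels_db, band_weights, full_credit_db=30.0):
    """Hypothetical index: weighted average of per-band audibility (0 to 1).

    Each band's sensation level is clipped to [0, full_credit_db] and scaled
    to 0..1, then combined as a dot product with normalized band weights.
    The weights stand in for the energy contribution of each band for a
    given genre; the values used below are invented for illustration.
    """
    if len(sensation_levels_db) != len(band_weights):
        raise ValueError("one weight is needed per band")
    total_weight = sum(band_weights)
    index = 0.0
    for level_db, weight in zip(sensation_levels_db, band_weights):
        audibility = min(max(level_db, 0.0), full_credit_db) / full_credit_db
        index += audibility * (weight / total_weight)
    return index


# Invented example: four bands, low to high frequency.
aided_sensation_levels = [30.0, 15.0, 0.0, 45.0]  # dB SL per band
orchestral_weights = [0.4, 0.3, 0.2, 0.1]         # hypothetical genre weights
print(round(music_preference_index(aided_sensation_levels, orchestral_weights), 2))
```

A different genre would simply use a different weight vector, which is one way to read the suggestion of a series of indices rather than a single one.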

Study 5: For music, would a single output stage and receiver be better than many?

Although it is true that the “long term” speech spectrum is wideband, at any one point in time the speech bandwidth is quite narrow when compared to music. Speech is either low-frequency sonorant (nasals or vowels) or high-frequency obstruent (consonants), but never both. In contrast, music always has low-frequency (fundamental) and high-frequency (harmonic) information at the same time. This has yet-to-be-determined ramifications for whether a single hearing aid output stage or receiver, or multiple receivers (each specializing in its own frequency band), would be better or worse for speech versus music. A hypothesis is that amplified music should be transduced through a single receiver (to respond to its concurrent wideband nature), and speech through two or more receivers (with low-frequency and high-frequency information being treated separately). This hypothesis also has implications for in-ear monitor design, which adheres to a “more is better” approach rather than a “less is better” approach.

Study 6: Does the optimization of the front-end analog-to-digital converter for music also have benefits for the hard-of-hearing person’s own voice?

Although the level of speech at 1 meter is on the order of 65 dB SPL (RMS), the level of a person’s own voice at their own hearing aid microphone (which is much closer) is roughly 20 dB greater. And with crest factors for speech being at least 12 to 15 dB, the peak input level of their own voice can be close to 100 dB SPL [2]. Does resolving the front-end processing issue for music with a more appropriate analog-to-digital converter also benefit hard-of-hearing consumers who wear hearing aids by improving the quality of their own amplified voice?
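The arithmetic behind the "close to 100 dB SPL" figure can be laid out as a short worked sketch, using only the numbers quoted above (the variable names are illustrative):

```python
# Values quoted in the text above.
speech_rms_at_1m_db = 65        # dB SPL (RMS) for speech at 1 meter
own_voice_increase_db = 20      # own voice at the hearing aid microphone
crest_factor_range_db = (12, 15)  # peak-to-RMS range for speech

own_voice_rms_db = speech_rms_at_1m_db + own_voice_increase_db
own_voice_peaks_db = tuple(own_voice_rms_db + cf for cf in crest_factor_range_db)

print(own_voice_rms_db)     # prints 85
print(own_voice_peaks_db)   # prints (97, 100)
```

The peaks, rather than the RMS level, are what the front-end analog-to-digital converter has to handle without distortion.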

Study 7: Given cochlear dead regions, would gain reduction be better than frequency lowering, which alters the frequencies of the harmonics?

Frequency lowering, in many of the formats that are commercially available, can be quite useful for speech, mostly to avoid over-amplifying cochlear dead regions that may be related to severe inner hair cell damage. Although this may be useful for speech, it does not follow that this would also be the case for instrumental music. A musical note, such as C#, is made up of a well-defined fundamental frequency and a range of well-defined harmonics at exact frequencies. Anything that changes those frequencies can make the music sound dissonant. A “gain-reduction” strategy (such as a -6 dB/oct roll-off above 1500 Hz, for example) in the offending frequency region(s) may be the most appropriate clinical approach, rather than any alteration to the frequencies of the harmonics of the amplified music. Gain reduction simply reduces the energy in a severely damaged region of the cochlea but does not alter the frequency and, as such, may be accepted more readily by the hard-of-hearing musician or listener.
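As a minimal sketch of the example roll-off, the function below computes the gain change implied by a -6 dB/oct slope above 1500 Hz. The function name and the flat (0 dB) response below the corner are assumptions; an actual fitting would apply this on top of whatever gain the prescription already provides.

```python
import math


def rolloff_gain_db(freq_hz, corner_hz=1500.0, slope_db_per_octave=-6.0):
    """Gain change (dB) for a roll-off that starts at corner_hz.

    Below the corner the gain is left unchanged (0 dB); above it the
    gain falls by slope_db_per_octave for every doubling of frequency.
    """
    if freq_hz <= corner_hz:
        return 0.0
    octaves_above_corner = math.log2(freq_hz / corner_hz)
    return slope_db_per_octave * octaves_above_corner


# The harmonics keep their exact frequencies; only their levels change.
for freq_hz in (750.0, 1500.0, 3000.0, 6000.0):
    print(freq_hz, round(rolloff_gain_db(freq_hz), 1))
```

One octave above the corner (3000 Hz) the gain is down 6 dB and two octaves above (6000 Hz) it is down 12 dB, while every harmonic stays at its original frequency.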

Study 8: Since “streamed music” has already been compression limited (CL) once during its creation, a music program for streamed music should have a more linear response than for a speech-in-quiet program.

Commercially available streamed music and MP3 audio files have already been compression limited in their creation. Providing additional compression to these files may be problematic because the hearing aid program applies an unnecessary second round of compression. A hypothesis would be that for streamed music, the processing strategy should be more linear than the processing for a speech-in-quiet program. When listening to rock music that had already been compression limited, subjects preferred a linear response [3]. That is, as long as the music is sufficiently loud, a “less may be more” approach may be useful. More research is required in this area, and a study of the frequency dependence of the (adaptive) compressor knee-point with music would provide more information for the clinical audiologist.
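The effect of a second round of compression can be sketched with two static compressors in series. The knee-points, ratios, and levels below are hypothetical values chosen for easy arithmetic; they are not measured from any recording or hearing aid, and time constants are ignored.

```python
def compress_level(input_db, knee_db, ratio):
    """Static compression: above the knee, output rises 1/ratio dB per input dB."""
    if input_db <= knee_db:
        return input_db
    return knee_db + (input_db - knee_db) / ratio


def studio_then_hearing_aid(level_db, aid_ratio):
    """Hypothetical chain: studio compression limiting, then the hearing aid."""
    after_studio = compress_level(level_db, knee_db=70.0, ratio=4.0)
    return compress_level(after_studio, knee_db=50.0, ratio=aid_ratio)


soft_db, loud_db = 70.0, 90.0  # a 20 dB range in the original performance

# Hearing aid set to linear (1:1) versus 2:1 compression.
range_linear = studio_then_hearing_aid(loud_db, 1.0) - studio_then_hearing_aid(soft_db, 1.0)
range_compressed = studio_then_hearing_aid(loud_db, 2.0) - studio_then_hearing_aid(soft_db, 2.0)
print(range_linear, range_compressed)
```

In this example the original 20 dB range is already reduced to 5 dB by the studio stage, and the 2:1 hearing aid stage halves it again to 2.5 dB, an effective ratio of 8:1 above both knee-points.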

Study 9: Research has found that with cochlear dead regions, people with high-frequency sensorineural hearing loss find that speech and music sound “flat.” Would people with a low-frequency loss find that speech and music sound “sharp?”

A study by Hallowell Davis and colleagues examining subjects with unilateral high-frequency sensorineural hearing loss found that speech sounded flat in the damaged ear compared to their normal hearing ear [4]. In this study, subjects were provided with two unmarked knobs: one controlled frequency and the other sound level. In the lower frequency region where both ears had normal sensitivity, there was a good one-to-one correspondence, but in the area of sensorineural damage, the subjects found that the sound in the damaged ear became progressively louder but not higher pitched as the frequency was increased. That is, the subjects heard the sound as progressively “flat” in the damaged ear. This is the basis for frequency-lowering algorithms in hearing aids. Because of the way that Davis and his colleagues designed the study, this would be independent of input stimulus and, as such, would be equally applicable for speech and for music. This study can be replicated for those with a unilateral low-frequency sensorineural hearing loss, such as people with unilateral Meniere’s Syndrome, to confirm that sound appears to be “sharp” in the damaged region.

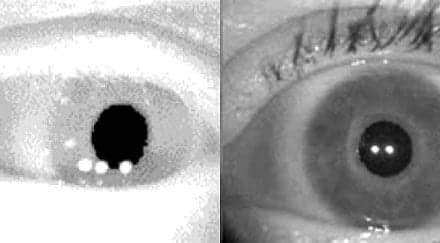

Study 10: Is there a more clinically efficient method than the TEN (HL) test which will only take seconds rather than 8-10 minutes to determine cochlear dead regions?

The Threshold Equalization in Noise test [calibrated for HL, TEN (HL)] was first designed in the 1990s and is clinically available on CD as well as a built-in option for some audiometers. The TEN (HL) test was designed to find cochlear dead regions, which should be avoided in any hearing aid fitting [5]. But the TEN (HL) test can take 8-10 minutes (for two ears and four test frequencies), which can be clinically problematic. In contrast, an alternative method is based on the Davis et al [4] study, which indicated that pure tones in a cochlear dead region sound flat relative to the normal hearing ear (or, more often, relative to how the patient “remembered” music sounding). In this scenario, a person only needs to play adjacent notes on a piano keyboard and indicate where two adjacent notes do not sound like different pitches. This can also be viewed as a cochlear dead region that needs to be avoided in a successful hearing aid fitting (for speech and for music). The piano keyboard method (which only requires a “same/different” judgment of pitch) takes only 20 seconds and can actually be used prior to a client coming to the clinic. A comparative study of these two methods of assessing cochlear dead regions would make for an interesting project, and this work can be translated into the busy clinical environment.
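To compare keyboard results with audiometric test frequencies, the keys that a listener reports as sounding the same have to be converted to hertz. The sketch below assumes a standard 88-key piano in equal temperament with A4 (key 49) tuned to 440 Hz; the function names and the listener responses are hypothetical, and this is an illustration of the bookkeeping, not a validated clinical procedure.

```python
def piano_key_frequency(key_number, a4_hz=440.0):
    """Frequency of a key on an 88-key piano (key 49 = A4), equal temperament."""
    return a4_hz * 2 ** ((key_number - 49) / 12)


def suspected_region_hz(same_judgments):
    """Return (low_hz, high_hz) spanning adjacent-key pairs judged 'same'.

    same_judgments maps (lower_key, upper_key) to True when the listener
    reports that the two adjacent notes do NOT sound like different pitches.
    Returns None when every pair was heard as different.
    """
    flagged = [pair for pair, same in sorted(same_judgments.items()) if same]
    if not flagged:
        return None
    return (piano_key_frequency(flagged[0][0]),
            piano_key_frequency(flagged[-1][1]))


# Hypothetical responses: pitch stops changing from A5 (key 61) upward.
responses = {(49, 50): False, (61, 62): True, (62, 63): True}
region = suspected_region_hz(responses)
print(region)
```

Adjacent keys differ by a factor of 2 ** (1/12), or about 6 percent in frequency, so the keyboard gives a finer frequency grid than the handful of test frequencies typically used with the TEN (HL) test.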

Marshall Chasin, AuD, is an audiologist and the director of auditory research at the Musicians’ Clinics of Canada, adjunct professor at the University of Toronto, and adjunct associate professor at Western University. Correspondence to: [email protected].

References:

1. Chasin M. Music and Hearing Aids. Plural Publishing; 2022.

2. Cornelisse LE, Gagné JP, Seewald RC. Ear Level Recordings of the Long-Term Average Spectrum of Speech. Ear and Hearing. 1991;12(1):47-54. doi: https://doi.org/10.1097/00003446-199102000-00006

3. Croghan NBH, Arehart KH, Kates JM. Quality and loudness judgments for music subjected to compression limiting. The Journal of the Acoustical Society of America. 2012;132(2):1177-1188. doi: https://doi.org/10.1121/1.4730881

4. Davis H, Morgan CT, Hawkins JE, Galambos R, Smith FW. Temporary Deafness Following Exposure To Loud Tones and Noise. The Laryngoscope. 1946;56(1):19-21. doi: https://doi.org/10.1288/00005537-194601000-00002

5. Moore BCJ, Huss M, Vickers DA, Glasberg BR, Alcántara JI. A Test for the Diagnosis of Dead Regions in the Cochlea. British Journal of Audiology. 2000;34(4):205-224. doi: https://doi.org/10.3109/03005364000000131
