Part 2: Where will DSP take the industry and hearing health care?

This article presents the views of eight industry experts, including Christian Berg, director of worldwide research and development for Phonak; Victor Bray, vice president of auditory research for Sonic Innovations; Francis Kuk, director of audiology for Widex Hearing Aid Co; Pierre Laurent, director of product marketing for Intrason France; Giselle Matsui, director of product management for GN ReSound; Thomas Powers, director of audiology and strategic development for Siemens Hearing Solutions; Jerome Ruzicka, president of Starkey Laboratories; and Donald Schum, vice president of audiology for Oticon Inc. As with any speculation on the future, it was recognized during these interviews that their comments represented “best guesses” pertaining to the present and future directions of digital instruments, and they were encouraged to provide candid and even wild ideas on what might be expected in the next 10 years and beyond.

The January 2002 issue of HR contained Part 1 of this article, which focused on the evolution of digital signal processing (DSP) technology in hearing instruments, including the magnitude of advancement that DSP technology represents relative to analog programmable instruments, hearing-in-noise issues, and the development of new audiological rationales. Part 2 looks at the future of digital instruments: how they will continue to change the industry and what they are likely to look like in the future.

DSP Changes the Industry

Digital technology has changed the industry in fundamental ways on almost all levels. When Oticon and Widex introduced the first digital ITEs in 1996, programmable instruments made up 12.5% of the market.1 With the introduction of DSP instruments, the use of both programmable and digital instruments skyrocketed, possibly due to the much more defined “good-better-best” product and price stratification. Today, programmable instruments are 31.8% of the market, while digital aids make up 27.2%, together accounting for about three in five of all hearing aids dispensed. Perhaps more importantly from a dispensing standpoint, they now account for almost three-quarters (74.3%) of the gross revenues received by the average dispensing office/practice.2

This dispensing sea-change has had a profound impact on hearing industry structure. Because the cost of R&D and distribution of digital aids (including software development, dispenser training, and education) can run as high as $20-$30 million, industry manufacturers have been forced to place great emphasis on their economies of scale. This has spawned a 2+ year consolidation movement, the likes of which the industry has never seen.

The conventional wisdom in the industry is that more consolidation among manufacturers is likely because manufacturing/distribution costs continue to increase and competition continues to intensify. But there is also the possibility that digital technology may be opening doors for new entrants. “DSP may actually start to drive industry expansion,” says Jerry Ruzicka of Starkey. “I think the cost of entry at the hardware level is rapidly changing. Traditionally, the cost of entry into the digital market has been very high. However, the geometries that we use today are actually leveling off so we can get multiple products out of the upcoming digital platforms. I think the technology is moving into a more mature phase, and as it does, it means that more products can be developed more rapidly. I think more general purpose products will be introduced to the market, and I’d suggest that this will result in an expansion, because the technology simply won’t be unique anymore.”

Ruzicka suggests that the average retail cost of digital instruments will also continue to decline, and several of those interviewed said that the declining price of DSP instruments will open the market to a new group of consumers. “The higher price of DSP hearing instruments in the US market leads to too many expectations,” says Pierre Laurent of Intrason. “Often the price difference between an analog or programmable and a digital is simply not worth it in the eyes of some consumers. In France, the price scale between analog and digital is much less than in the US.” Laurent points out that the market in France is 82% digital, and he suggests that the same trend may occur in the US.

Few would disagree with Laurent’s point that, as the price of digital comes down, the technology will eventually come to dominate the market, if not completely overtake it. Unfortunately, the relatively small size of the hearing industry and the cost of developing a DSP instrument have handcuffed manufacturers. Donald Schum of Oticon points out that the industry can be viewed as being in a necessary evolutionary phase in which the core technology found in any given digital instrument needs to provide “all things for all people.” He likens this approach to crash-carts found in emergency rooms: “If you go into the operating room to deliver a baby or have a minor surgery,” says Schum, “there is a lot of expensive equipment, like crash-carts, respirators, etc, that are designed to save lives. Although you’re paying for this equipment to be there, it’s fairly unlikely that you’ll use any of it during your procedure. This equipment is not used unless it really has to be used. Likewise, in many ways, the processing capabilities and features included in digital products can be viewed from this standpoint: there are a lot of possibilities and potentials for addressing any number of hearing losses and individual situations. Any given patient isn’t going to use the majority of the technology within that product, but it’s still all there because you don’t necessarily know beforehand what might be needed in the long run to make that fitting a complete success.” Schum says that, as digital instruments evolve to true open-platform systems and diagnostic procedures become more refined, some of these unnecessary built-in features may be avoided.

DSP Directional Instruments

Of the 44 digital instrument lines available in 2001, over half (24) offered at least one directional model/option in the line. Today, most digital instruments coming to market have some provision for directionality. “The fact is that, whenever we discuss digital processing, I think we now need to discuss it in terms of directional microphone processing, as well,” says Tom Powers of Siemens. “These two technologies will be used in conjunction to show overall hearing improvement. Until we find a way to separate useful speech signals from speech noise, DSP will continue to take something of a backseat to directional technology. Without question, the convergence of DSP and directional microphone technology certainly is going to be a large factor for all manufacturers’ product development.”

“There have been a number of generations of directional instruments,” says Christian Berg of Phonak, “and the first generation was not a success because the user couldn’t switch it on and off…What we have seen in the later directional systems is the formation of different directional patterns, or the selection of the areas that you want to emphasize, and we believe this can be developed even further. Some companies have come up with systems that can change the polar pattern of the device, and we have come up with a way of doing this adaptively. I think you’ll see directional systems continue to become more intelligent. Additionally, DSP lends itself to adding delays, as well as phase and time information.”

One interesting question is whether a digital algorithm might ever be able to replicate or replace the function of a directional microphone. According to Francis Kuk, this is highly unlikely: “Digital cannot duplicate the functions of a directional microphone. They are two different things. Digital technology involves picking the sounds that a transducer (whether it’s an omni-directional or several different microphones) has provided and manipulating the signals to result in a directional pattern best suited for the wearer. From that standpoint, digital itself still requires more than one microphone [for enhancing directionality].

“I’m not so sure that directional microphone technology is actually making a huge impact on DSP technology per se,” continues Kuk. “Instead, what is happening is that DSP technology is influencing the development of directional instrument technology. The directional microphone works when you have at least two openings [ports] on the faceplate, and it works on the delay of the signals between the microphones. From that standpoint, the directional microphone isn’t really influencing DSP. However, because we digitally manipulate the signals, it results in different delays between the microphones. We can provide adaptive directionality that is seen in several products, including ours, and we can also use digital technology with noise reduction, for example, to ascertain what kind of noise is present in the environment and identify things like wind noise, or circuit noise, or speech sounds—and, accordingly, have the microphone change its polar pattern adaptively…Without digital technology, this would be impossible to implement.”
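The delay principle Kuk describes can be illustrated with a minimal Python sketch of a textbook two-port delay-and-subtract microphone. It is not any manufacturer's implementation. The port spacing, speed of sound, test frequency, and the function names `directional_response` and `null_angle_deg` are all assumptions made for the example. Changing the internal delay moves the null of the polar pattern, which is the kind of adjustment an adaptive system could make digitally.

```python
import cmath
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at room temperature


def directional_response(angle_deg, internal_delay_s,
                         port_spacing_m=0.012, freq_hz=1000.0):
    """Magnitude response of a two-port delay-and-subtract microphone.

    The rear-port signal is delayed by internal_delay_s and subtracted
    from the front-port signal. angle_deg is the arrival angle of a plane
    wave (0 = front, 180 = rear). Spacing and frequency are hypothetical.
    """
    omega = 2.0 * math.pi * freq_hz
    # Acoustic travel time between the two ports for this arrival angle.
    external_delay = (port_spacing_m * math.cos(math.radians(angle_deg))
                      / SPEED_OF_SOUND)
    return abs(1.0 - cmath.exp(-1j * omega * (internal_delay_s + external_delay)))


def null_angle_deg(internal_delay_s, port_spacing_m=0.012):
    """Angle at which the internal delay cancels the acoustic delay."""
    ratio = -internal_delay_s * SPEED_OF_SOUND / port_spacing_m
    ratio = max(-1.0, min(1.0, ratio))  # guard against rounding past +/-1
    return math.degrees(math.acos(ratio))


if __name__ == "__main__":
    spacing = 0.012  # hypothetical 12 mm port spacing
    acoustic_delay = spacing / SPEED_OF_SOUND
    patterns = {
        "dipole (no internal delay)": 0.0,
        "hypercardioid (one third of acoustic delay)": acoustic_delay / 3.0,
        "cardioid (equal to acoustic delay)": acoustic_delay,
    }
    for name, delay in patterns.items():
        print(name, "null at", round(null_angle_deg(delay, spacing), 1), "degrees")
```

In this simplified model the only quantity that changes between patterns is the internal delay, so steering the null toward a noise source amounts to updating one number in software.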

“Directional microphones have been proven to improve hearing in noise,” says Ruzicka. “I think that, by using DSP hardware, we can do some things with directional systems that basically we have no control over in the analog world. I think these systems will play a large part in the future, although size is always a limiting factor. In terms of a BTE type product, I think directional systems are quickly becoming the standard.”

Knowles MarkeTrak articles indicate that a key feature of customer satisfaction involves the ability to hear better in noise. “In order for us to look at what the consumer wants,” says Powers, “we need to look at speech noise and that’s the domain of directional microphone technology. The industry has a tremendous tool in combining directional mics—a technology that has been around since about 1971—with digital technology. If the patient is out in the real world and encounters any type of noise, whether it’s speech or environmental noise, the digital directional product stands a good chance of providing them with at least some improvement in the ability to use the system. I’m careful not to say they’ll hear better or experience improved speech intelligibility, because what the system may actually be doing is improving comfort or it may be reducing overall noise levels. While the client’s speech intelligibility may not be improved significantly, they’re hearing enough to carry on a conversation while being more comfortable and being less fatigued by annoying sounds.”

Victor Bray of Sonic Innovations believes that a harbinger of future results and potentials for directionality and DSP can be seen in a recent clinical study (see Bray and Nilsson, December 2001 HR) that demonstrates a 2-3 dB SNR benefit from the core signal processing (multi-channel compression), 1 dB SNR benefit from digital noise reduction, and 2-3 dB SNR benefit from directionality. “There is an additive effect of these three features,” says Bray, “leading to a summed benefit of 6 dB SNR for the binaural, digital, directional hearing aid as a system.”
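The arithmetic behind the summed figure can be checked with a short Python sketch. It assumes, as Bray states, that the three benefits simply add in dB; the function name `summed_benefit` is hypothetical, and the ranges are the ones quoted above. Under that assumption the total spans 5 to 7 dB, and 6 dB is the midpoint.

```python
def summed_benefit(ranges_db):
    """Add (low, high) dB SNR benefit ranges, assuming they are additive."""
    ranges_db = list(ranges_db)
    low = sum(lo for lo, _ in ranges_db)
    high = sum(hi for _, hi in ranges_db)
    return low, high, (low + high) / 2.0


if __name__ == "__main__":
    # Figures as quoted in the article; a single value is a zero-width range.
    benefits = {
        "multi-channel compression": (2.0, 3.0),
        "digital noise reduction": (1.0, 1.0),
        "directionality": (2.0, 3.0),
    }
    low, high, midpoint = summed_benefit(benefits.values())
    print("Summed benefit:", low, "to", high, "dB SNR, midpoint", midpoint)
```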

Fitting Software and the Future

As mentioned in Part 1 of this article, manufacturers’ distinct fitting strategies and audiological rationales are coming to define digital hearing instruments more so than the hardware contained within the devices. Correspondingly, fitting software has become absolutely crucial to the acceptance of a product by hearing care professionals in the new digital age.

“The programming software and the fitting rationales are 80% of the commercial success of the product,” says Laurent. “Fitting DSP instruments will not become more complicated; in fact, we are working on keeping the software easy, as it is a key for the success of a product line. At the same time, we offer more possibilities for those who want to modify a greater number of parameters. Giving the right tools to test the efficacy of the fitting is also a part of the system we are developing.”

“The software interface for professionals has become much more intelligent,” says Ruzicka. “In the last decade, we were teaching about compression, the setting of compression ratios and kneepoints, and number of channels, and I don’t think those necessarily contribute to a better fitting per se; it’s the algorithms that are established at the research level that have to be implemented through a user interface which is easy to use. This requires that the patient be fit to a high degree of accuracy, and what is left is only a matter of fine-tuning…In these cases, the user interface is very important, but it’s really the algorithm behind the instrument.”

Those interviewed generally agreed that the audiological reasoning behind programming is more complicated, by necessity, than 5-6 years ago because the breadth of possibilities in the products is greater. It does take a greater investment of mental time and energy on the dispenser’s part to be familiar with the capabilities of each product from each manufacturer and to make those products work well for the client. But, at the same time, the quick fitting and guided fitting software components are getting better industry-wide.

“There are features and aspects in all DSP lines that could be controlled from the user interface but are not because the fitting would become too complicated,” says Powers. “If you look at any of the DSP products on the market, there may be 100-150 different parameters that could be controlled by the dispensing professional—but adjusting these parameters might necessitate a fitting time of 3 days. So those parameters need to be collapsed into a practical, manageable user interface. And even the 15-30 controls that are generally accessible and controlled via the fitting software provide a level of complexity that not only requires education on the part of the dispenser, but can challenge anyone. All of the software in the industry uses a first-fit mode where the parameters are set based on audiometric information. But for 15%-30% of patients, there will be adjustments required, which means you might have to make changes that affect the 15-30 controls that do exist—and to do this, you need to understand what these controls do, and how they may influence other parameters and other aspects of the fitting.”
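The first-fit-then-adjust workflow Powers describes can be sketched in a few lines of Python. The sketch uses the classic half-gain rule (target gain of roughly half the hearing threshold level) purely as a stand-in; it is not the fitting rationale of any manufacturer quoted here. The audiogram values, the `max_gain_db` ceiling, and the function names `first_fit_gain` and `fine_tune` are hypothetical.

```python
def first_fit_gain(audiogram_db_hl, max_gain_db=60.0):
    """Derive starting gain targets from an audiogram.

    audiogram_db_hl maps frequency in Hz to threshold in dB HL.
    Uses the half-gain rule as an illustrative stand-in for a
    proprietary fitting rationale; max_gain_db is an assumed ceiling.
    """
    return {
        freq: min(max(threshold / 2.0, 0.0), max_gain_db)
        for freq, threshold in audiogram_db_hl.items()
    }


def fine_tune(targets_db, adjustments_db):
    """Apply per-frequency adjustments (in dB) to first-fit targets."""
    tuned = dict(targets_db)
    for freq, delta in adjustments_db.items():
        if freq not in tuned:
            raise KeyError(f"no first-fit target at {freq} Hz")
        tuned[freq] += delta
    return tuned


if __name__ == "__main__":
    # Hypothetical sloping high-frequency loss.
    audiogram = {500: 30.0, 1000: 40.0, 2000: 50.0, 4000: 60.0}
    targets = first_fit_gain(audiogram)
    print("First fit:", targets)
    # A follow-up visit: the patient reports sounds are too sharp.
    print("Fine-tuned:", fine_tune(targets, {4000: -3.0}))
```

Real fitting software sets many more parameters than gain, and the controls interact, which is the point Powers makes about needing to understand what each adjustment affects.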

“Today’s advanced technology hearing instruments can achieve a good fitting ‘out of the box’ [eg, based only on the pure-tone audiogram] about two-thirds of the time,” says Bray. “However, to extract the maximum benefit, the multi-channel gain and output settings need to be configured and fine-tuned to individual sensitivity and loudness functions across multiple frequencies for each ear. The clinicians who have high success rates are those who know the product and understand how to use the software system. Looked at in that light, the clinician and programming system actually account for 100% of the product’s success.”

“The lesson that the industry has learned is that, when you have a simple, intuitive fitting system, people will accept it,” says Kuk. “Most manufacturers now have a portable version of their programmer. Many systems now are capable of programming a hearing instrument with five to 10 keystrokes. I think the software will necessarily need to remain simple and intuitive from the clinicians’ point of view, but the complexity of the processing or algorithms behind the device will become much greater…However, instead of doing five things in the programming and remembering everything that you’ve learned in school to fit the patient properly,” continues Kuk, “there may come a day when you will just input the patient’s information and the hearing aid itself will make the necessary adjustments.”

Interactive fittings are also likely to play a large role in the future of all types of hearing instruments. “We believe that fitting software will continue to become more interactive and involve the patient’s lifestyle,” says Matsui. “The patient will almost program the hearing instrument themselves by working their way through virtual environments that simulate everyday situations. We also believe that hearing instruments will become more intelligent by providing the dispenser with more information to allow them to fine-tune and troubleshoot hearing instrument fittings.” Bray agrees: “The next big step is to involve the patient in the fitting process, using automated software to configure and fine-tune the many digital hearing aid parameters,” he says. “This will be done with audio presentation of signals and the visual presentation of questions, then using patient responses to manipulate the hearing aid settings.”

Future DSP Devices

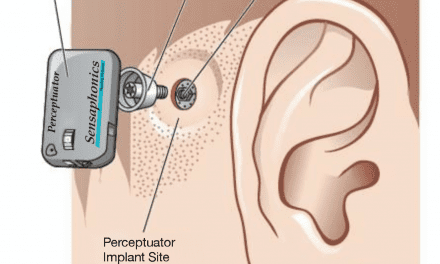

One of the most exciting opportunities for digital technology involves conjunctive DSP, or the ability to analyze digital signals from the right and left ears, compare and contrast those signals through sophisticated algorithms, then transmit the processed signals back to the listener in ways that maximize speech intelligibility or reduce noise. Binaural hearing instruments that “talk to each other” may also involve the use of an external processing unit that resembles a pager or cell phone clipped to a belt or shirt.

“We’ll see the miniaturization of FM, and we’ll see pairs of hearing aids communicating with each other in a wireless function,” says Schum. “Certainly a goal of the industry will be to have a set of CICs that are communicating with each other in real time and are able to perform binaural processing algorithms that should improve speech-in-noise and localization issues. This is really something that professionals can start thinking about on the horizon, and it may require all of us to think more in terms of fitting hearing instruments as one customized fitting for an individual’s needs rather than as individual fittings for each ear.”

Some of those interviewed say that these types of instruments could represent a major technological innovation for two reasons: 1) An external digital processing unit could utilize a stronger power source, providing more sophisticated calculations (and, perhaps, paving the way for truly open programming systems); and 2) The resulting types of binaural amplification algorithms could more closely consider how the “brain” processes sound as opposed to fitting one aid to an individual ear. While a pager-type instrument could be a logical next-step in digital hearing instrument evolution, as the processing power of chips grows, these hearing instruments would likely return to being at-the-ear level devices. “Ideally, the processing for such a unit would all be done on-board without the need for an external unit to accomplish the task,” says Schum. “The pager-type device would be a transition stage—and possibly a long transition stage—until all the desired processing could move back into a completely ear-level device.”

In terms of FM listening systems being incorporated into digital aids as a standard feature, the problem lies not with technology but with the relatively small hearing instrument market. “We believe that the future will allow us to combine wireless communication technology with hearing instruments,” says Matsui. “This will allow us to implement powerful signal processing strategies which can improve communication in a wide variety of environments.”

“Many have suggested that we merge FM listening technology in a hearing aid as standard,” says Berg. “At this point, the cost issue for making such an FM receiver is not justifiable, because the consumer will end up carrying around a costly system that many won’t need or won’t use. Or the manufacturer will need duplicate product lines, offering instruments that have the FM capabilities and those that do not, again increasing manufacturing and consumer costs.” Berg points out that the Claro instrument has an optional integrated FM system.

Although the above technologies may represent large steps in the evolution of digital instruments, the smaller, “less sexy,” incremental improvements in algorithms and automatic features may end up yielding a bigger overall impact on customer satisfaction. “I think that big leaps are not likely on things like noise cancellation algorithms; instead the researchers will continually develop and refine better systems,” says Berg. “All the manipulation of sound in amplification and compression will get better, but there will be no true paradigm shift involved in this. Where I do see a paradigm shift occurring is that DSP itself is starting to address those things that lie outside of the two A/D converters. There is a lot occurring before the signal gets to the microphone, and there is a lot happening prior to the signal entering the internal auditory system. We have seen that, just by having the earmold mechanics under greater control, it provides the data and the ability to combine information that improves DSP function.”

The automatic functions of digital aids will also increase. “Ease of use for the patient means more automatic functions, less reliance on VCs, more automatic changing of gain, directional patterns, and a combination of these functions in a more intelligent way than are offered by even today’s best products,” says Powers.

Schum cites the improvements made recently in the area of hearing aid side-effects. “I think you’ll see better feedback and occlusion algorithms implemented, so that these types of problems will essentially become an old notion about past generations of hearing aids. Hearing aids will eventually not have any feedback, and patients won’t be required to have closed fittings. Even today we’re seeing improvements toward this goal.”

Bray concurs: “There will be relatively smaller, but very important, steps that help consumers in problematic areas. For example, in 1998, we found that we could minimize the occlusion effect by a narrow-band, low-frequency adjustment of the hearing aid output. Other companies are also using this approach or approaches similar to it.”

“There will be new features and technologies that are going to make a tremendous impact on the future of DSP performance,” says Ruzicka. “The main thing is that we can provide hearing instruments with some level of intelligence, and that hasn’t been available before. Additionally, I have to believe that some of the leading-edge systems of the future will be done on an open or flexible platform.”

References

1. Hearing Industries Association (HIA). Quarterly statistics report. Alexandria, Va: HIA; October 2001.

2. Strom KE. Slouching into the new millennium: 2001 brings little market growth to the hearing industry. Hearing Review. 2002;9(3):14-22.

3. Sammeth C. From vacuum tubes to digital microchips. Hearing Instruments. 1989;40(10):9-10, 12.

4. Sammeth C, Levitt H. Hearing aid selection and fitting in adults: history and evolution. In: Valente M, Hosford-Dunn H, Roeser R, eds. Audiology: Treatment. New York: Thieme Publishing; 2000:213-257.